4 Problems of Artificial Intelligence that Still Need Fixing

AI requires huge amounts of data to work correctly

In 2015, Google and Microsoft presented image-recognition algorithms that had learned to outperform humans at identifying objects in images. However, to outstrip humans, both algorithms had to scrutinize 1.2 million images. “A child can learn to recognize a new kind of object or animal using only one example,” writes Tom Simonite, editor of MIT Technology Review.

Neil Lawrence, Professor of Machine Learning at the University of Sheffield and Director of Machine Learning at Amazon, claims that AI needs hundreds of thousands of times more data than a human being to learn to recognize images.

“If you look at all the applications domains where deep learning is successful you’ll see they’re domains where we can acquire a lot of data,” says Lawrence. Using image recognition as an example, he stresses that industry giants such as Facebook and Google have access to “mountains of data” when working with the technology.

The difficulty is that in many areas it is hard to gather large amounts of data. For instance, machine vision is used in medicine to detect tumors on X-rays, but in some cases there simply is not enough digitized data available. Lawrence believes that collecting more data is not the solution; in his view, the only way forward is to use algorithms that require fewer resources.

AI is not able to multitask

Today’s AI algorithms can solve only one specific problem at a time. As Raia Hadsell, a research scientist at Google DeepMind, puts it, AI can be trained to recognize cats or to play Atari games, but so far no algorithm can do both. According to Hadsell, neural networks cannot expand their set of skills indefinitely: after being modified again and again, at some point they fail to adapt any further because of their size.

DeepMind researchers call this problem “catastrophic forgetting”. If, for example, developers train an AI to recognize human faces and then retrain it to recognize cows, it will forget how to recognize faces: the network has a fixed set of parameters, and learning the new task overwrites the values that encoded the old one. Today’s neural networks use millions of mathematical equations to model patterns, and these equations are so interconnected and interdependent that even small changes to the task they are meant to solve can make them fail.
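The effect can be reproduced even without a neural network. The sketch below is a hypothetical toy, not DeepMind’s code: it assumes a two-weight linear model trained by plain gradient descent on squared error, and two made-up “tasks” that share the first input feature. A single set of weights, roughly (-1, 3), solves both tasks, yet training on task B after task A pulls the shared weight away from the value task A depends on.

```python
# Toy illustration of catastrophic forgetting (hypothetical example, not a real network).
# Model: y = w[0] * x[0] + w[1] * x[1], trained with full-batch gradient descent on MSE.


def predict(w, x):
    """Linear model output for weights w and input x."""
    return w[0] * x[0] + w[1] * x[1]


def mse(w, data):
    """Mean squared error of weights w on a list of (x, y) pairs."""
    return sum((predict(w, x) - y) ** 2 for x, y in data) / len(data)


def train(w, data, lr=0.1, epochs=500):
    """Return new weights after gradient descent on the MSE of `data` only."""
    w = list(w)
    for _ in range(epochs):
        grad = [0.0, 0.0]
        for x, y in data:
            err = predict(w, x) - y
            grad[0] += 2 * err * x[0] / len(data)
            grad[1] += 2 * err * x[1] / len(data)
        w = [w[0] - lr * grad[0], w[1] - lr * grad[1]]
    return w


# Two hypothetical tasks that share input feature 0.
task_a = [((1.0, 1.0), 2.0)]
task_b = [((1.0, 0.0), -1.0)]

# Sequential training: learn task A, then train on task B alone.
w_seq = train([0.0, 0.0], task_a)
loss_a_before = mse(w_seq, task_a)
w_seq = train(w_seq, task_b)
loss_a_after = mse(w_seq, task_a)

# Joint training on both tasks at once finds weights that fit both.
w_joint = train([0.0, 0.0], task_a + task_b)

print(f"task A loss after learning A:       {loss_a_before:.4f}")
print(f"task A loss after then learning B:  {loss_a_after:.4f}")
print(f"joint weights: {w_joint[0]:.3f}, {w_joint[1]:.3f}")
```

Running it should show task A’s loss close to 0 after the first phase and close to 4 after the second, while joint training ends near (-1, 3) and fits both tasks. The gradient updates for task B only see task B’s data, so nothing protects the weight that task A relied on.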

Nobody fully understands how AI works

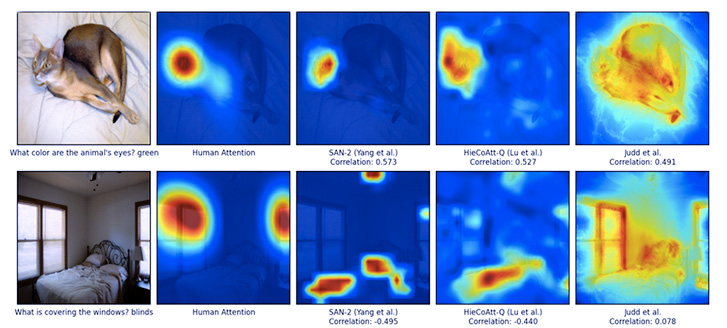

In 2016 researchers at Virginia Polytechnic Institute conducted an experiment to determine what area AI “looks at” to solve a task. They showed AI a photo of a bedroom asking “What is covering the windows?” It turned out that instead of focusing on the windows, AI first scanned the floor. Then the neural network saw the bed and answered: “blinds are covering the windows”.

AI’s answer was correct but only because it did not have enough data. The neural network recognized the bed and therefore identified that it is a bedroom in the photo, and using this information concluded that the windows are covered by blinds. “It is logical, but stupid at the same time. Many bedrooms don’t have blinds!” commented James Vincent, editor of The Verge.

Results of the research conducted in Virginia Polytechnic Institute

The problem is that there is no straightforward way to look “inside” a neural network and see how it works. A network’s reasoning emerges from the behavior of thousands of artificial neurons arranged in as many as hundreds of layers.

Starting in 2018, the European Union may require companies to explain to their customers the decisions made by the neural networks they use. “This might be impossible, even for systems that seem relatively simple on the surface, such as the apps and websites that use deep learning to serve ads or recommend songs. The computers that run those services have programmed themselves, and they have done it in ways we cannot understand. Even the engineers who build these apps cannot fully explain their behavior,” notes Will Knight, Senior Editor of MIT Technology Review.

AI’s capabilities arouse concern

Today, the potential power of AI worries many entrepreneurs and scientists. Back in 2014, Elon Musk, CEO of Tesla and SpaceX, called AI “our biggest existential threat”: “With artificial intelligence we are summoning the demon. In all those stories where there’s the guy with the pentagram and the holy water, it’s like – yeah, he’s sure he can control the demon. Doesn’t work out,” said Musk.

His opinion has not changed since then, and he has backed it with action. At the end of 2015, Musk and Sam Altman, then president of Y Combinator, co-founded OpenAI, a non-profit organization whose purpose is to find ways in which AI can benefit humanity.

Physicist Stephen Hawking also voiced concerns about AI’s potential for harm. In his opinion, further development of the technology could have both positive and negative consequences; among the negative ones, he named autonomous weapons, economic disruption, and conflict between machines and humanity. “In short, the rise of powerful AI will be either the best, or the worst thing, ever to happen to humanity,” said Hawking.

Others concerned about AI’s potential harm include Microsoft co-founder Bill Gates, World Wide Web inventor Tim Berners-Lee, and Apple co-founder Steve Wozniak. “If we build these devices to take care of everything for us, eventually they’ll think faster than us and they’ll get rid of the slow humans to run companies more efficiently,” said Wozniak.
