Generative AI and Power BI: A Powerful Duo for Data Analysis

What Is Generative AI?

Generative AI is an umbrella term for a range of powerful models capable of producing original outputs based on the information they are given, hence the moniker “generative”. These models can create anything from fresh text (like ChatGPT) and never-before-seen visuals (like DALL·E 2) to new audio pieces (like AudioLM) or code snippets (like GitHub Copilot).

In the simplest terms, generative AI is trained on massive data samples. The goal of the training is to teach the model the patterns and associations present in that data: for instance, a model learns that an apple is typically described as “red”, “round”, or “juicy”. The data sets need to be substantial. For example, GPT-3 has 175 billion parameters and was trained on text drawn from roughly 45 terabytes of raw data, and it is not even the largest model there is.

Neural networks lie behind the impressive capabilities of modern generative AI models. This machine learning technique mimics the human brain's structure by utilizing interconnected processing units (neurons) arranged in layers. Essentially, these neural networks give generative AI models the “intelligence” to produce creative outputs, make data-backed decisions, and perform a variety of other tasks.
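To make the layered structure more concrete, here is a minimal sketch of a forward pass through a tiny two-layer network in plain Python. The layer sizes, weights, and biases are made-up illustrative values, not taken from any real model; production generative models learn billions of such parameters during training.

```python
import math


def sigmoid(x: float) -> float:
    """Squash a value into the range (0, 1)."""
    return 1.0 / (1.0 + math.exp(-x))


def dense_layer(inputs, weights, biases):
    """One layer: each neuron takes a weighted sum of all inputs, adds a bias,
    and passes the result through an activation function."""
    return [
        sigmoid(sum(w * x for w, x in zip(neuron_weights, inputs)) + bias)
        for neuron_weights, bias in zip(weights, biases)
    ]


# Hypothetical parameters for a 2-input -> 3-neuron -> 1-neuron network.
HIDDEN_WEIGHTS = [[0.5, -0.2], [0.1, 0.8], [-0.7, 0.3]]
HIDDEN_BIASES = [0.0, 0.1, -0.1]
OUTPUT_WEIGHTS = [[1.0, -1.0, 0.5]]
OUTPUT_BIASES = [0.0]


def forward(inputs):
    """Pass the inputs through the hidden layer, then the output layer."""
    hidden = dense_layer(inputs, HIDDEN_WEIGHTS, HIDDEN_BIASES)
    return dense_layer(hidden, OUTPUT_WEIGHTS, OUTPUT_BIASES)


prediction = forward([1.0, 2.0])
```

Training consists of adjusting the weights and biases until outputs like `prediction` match the examples in the training data; the sketch only shows how a signal flows through the layers once those values are fixed.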

Benefits of Generative AI Models

The big boon of generative AI models is their user-friendliness. Unlike traditional programming that requires complex code, generative AI can be instructed using natural language – simply type in your request!

This accessibility, combined with the lightning-fast generation of outputs, translates to a significant boost in worker productivity and delivers substantial economic benefits.

Efficiency Augmentation

Generative AI models can take over a number of routine, low-value tasks. For example, in the business intelligence domain, generative AI models can help with data querying, analysis, and visualization. In software engineering, generative tools can help with code reviews and refactoring, plus a wide range of infrastructure management tasks.

McKinsey estimates that Gen AI and related technologies could automate work activities that currently absorb 60%-70% of employees' time.

Accessibility for All

Generative AI can also make data analytics more accessible to a wider audience by bridging the data skills gap. Users with any background can interact with data using natural language commands to receive personalized results. Likewise, generative AI models can be tasked to build data visualizations and dashboards with clear commentary on the data, sources, and statistical methods used. By utilizing explainability features (XAI), users can understand the model’s reasoning to mitigate the risks of biases or inconsistencies.

Algorithmic Creativity

Trained on massive datasets, generative AI excels at identifying unique patterns and correlations that humans might miss. This creative “shtick” can enhance the innovative capabilities of your business. For example, Simcenter has a generative AI tool that helps discover optimal system architectures. The model scans through thousands of possibilities based on the input product characteristics and then suggests the best-fit system architecture pattern.

Power BI AI Capabilities: Overview

Microsoft has a well-established reputation for innovation in AI. Its cloud AI developer services have ranked among the leaders in Gartner's Magic Quadrant for five years running. These services empower developers to build, deploy, and manage custom AI models in production environments.

Since the beginning of 2023, Microsoft has been integrating a new “Copilot” mode across its products, including Bing, Microsoft 365, and most importantly for data analysis, Power BI.

The following section will explore four key options for deploying AI within Power BI for enhanced data exploration and insights.

Copilot

Power BI employs Microsoft's advanced generative AI model to give users a seamless, natural language interface. Introduced in May 2023, Copilot within Power BI lets users interact with data using conversational prompts for tasks like data retrieval, editing Data Analysis Expressions (DAX) calculations, and even generating reports or visual dashboards. Beyond data manipulation, Copilot acts as a conversational guide, offering insightful answers to user queries and generating data summaries that enhance data storytelling.

ChatGPT

Developers can also integrate ChatGPT directly with Power BI to get assistance from the well-known OpenAI model. Firstly, ChatGPT can help construct complex calculations and advanced queries within Power BI models. Secondly, its analytical capabilities can be leveraged to troubleshoot errors encountered during development. Finally, ChatGPT holds promise for optimizing report generation by automating repetitive tasks and refining report structures.

Power Query M is the formula language used for data manipulation within Power BI. Tools like ChatGPT could potentially support Power Query M development in the form of:

- error correction

- code generation based on user instructions

- iterative development through natural-language prompts.

Azure Cognitive Services

For advanced AI capabilities within Power BI, developers can leverage Microsoft Azure Cognitive Services – pre-trained, customizable AI models, packaged as application programming interfaces (APIs). Deployable to any cloud or edge application with containers, Cognitive Services provide advanced analytical capabilities to enhance applications.

Power BI offers an option to enrich existing dataflows with available Cognitive Services models via a graphical interface. Current Power BI integration with Cognitive Services supports:

- Automatic language detection and text recognition in 120 languages

- Keywords and phrases extraction from unstructured texts

- Sentiment analysis for smaller text documents

- Image tagging, capable of identifying over 2,000 objects.

Azure Cognitive Services help data analysts handle large datasets more effectively by reducing time spent on data cleansing, labeling, and preparation for self-service analytics usage.

Automated Machine Learning

Power BI also lets you build fully custom machine learning models to run against your data using automated machine learning (AutoML) tools from Azure Machine Learning service. AutoML provides tools for deploying supervised learning techniques such as binary prediction, classification, and regression models.

You do not need an Azure subscription to use AutoML in Power BI, since the tool fully manages the process of training and hosting ML models.

Generative AI Use Cases in Data Analytics and BI

Whether you are using Power BI or other self-service BI tools, generative AI models have a lot to offer in terms of streamlined workflows and superpowered analytical capabilities. Here is how you can uncover value buried beneath your data.

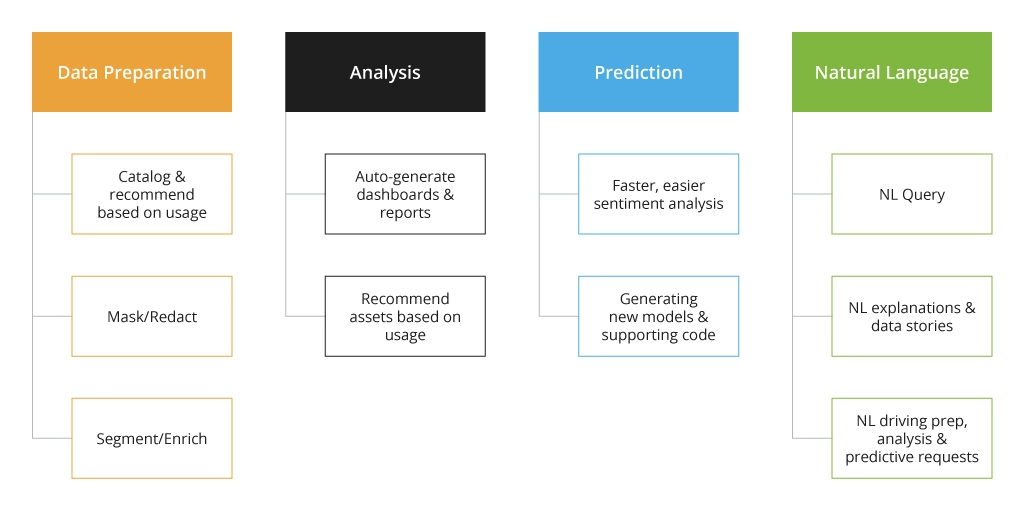

Data Preparation

Generative AI tackles a major pain point in data analysis: data silos. By automating data classification, tagging, anonymization, and segmentation, it streamlines data organization and accessibility. Tools like Microsoft Fabric, integrated with Power BI's Copilot mode, show how generative AI can improve data lineage and governance within data management platforms.

Data Analysis

Data analysis is fundamentally driven by the pursuit of new intel. The problem, however, is that traditional models often inherit the “thinking process” from their creators. For example, your domain experts may have preconceived notions and biases that would be incorporated into the model. Likewise, some users might struggle to formulate the right questions or approach the data from an unconventional angle.

Well-trained generative AI models can uncover new data dimensions and correlations and present them to business users for consideration. Generative AI models can ideate at fast speeds by building thousands of associations within seconds, generating various novel concepts for further human evaluation.

Reporting

Generative AI models are able to extract key findings and generate concise summaries from lengthy reports. These models can leverage analyzed data to automatically generate entire reports, including narrative text that contextualizes the findings. In this way, data analysts shift their focus towards higher-level tasks like interpretation, recommendation, and strategic data storytelling. Who wouldn’t benefit from clear-cut reports that effectively communicate complex findings in a timely manner?

Summarized Insights

The latest generation of Gen AI models is capable of scenario modeling and prescriptive analytics. Using historical data and domain knowledge, these models can assess the feasibility of proposed actions by juggling multiple variables and evaluating different scenarios. Based on these forecasts and feasibility assessments, the models can provide prescriptive recommendations for optimal decision-making.

How to Use Generative AI in Power BI

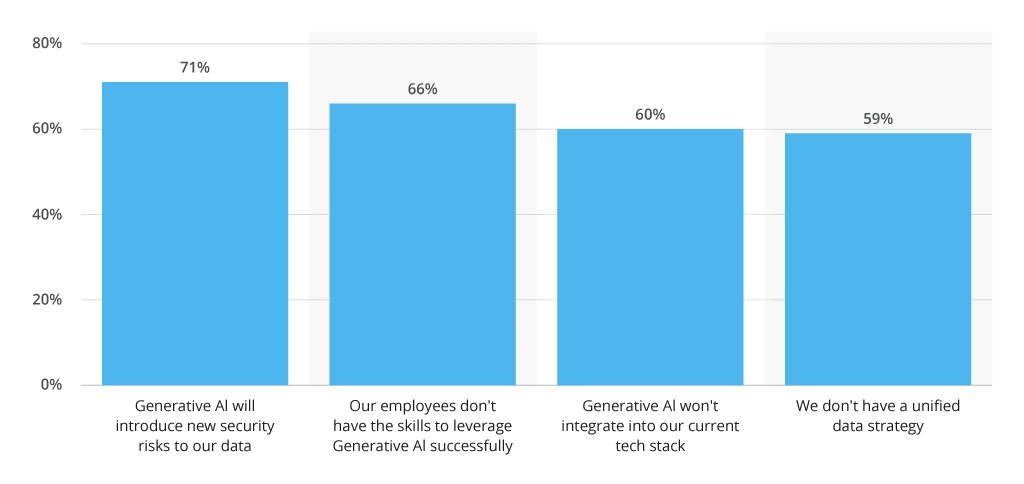

Gen AI can bring game-changing performance gains to data teams. However, despite all the hype and surging interest, many IT leaders are also wary of the potential security and bias risks.

The concerns around cybersecurity and data management are absolutely valid, as are wider concerns about cultivating a corporate culture of generative AI usage.

To capitalize on the full spectrum of generative AI capabilities, both present and future, organizations need to implement these best practices:

Focus on Data Security

Many commercial generative AI models use the input data for model training purposes, which may not be ideal for privacy-focused industries. Likewise, analysts may include sensitive data in proprietary models by mistake.

To mitigate data privacy and security risks of GenAI usage, organizations need to:

- Establish auditable trails on Gen AI data collection, storage, and processing practices.

- Use data anonymization and/or aggregation to mask sensitive data that is shared with AI models.

- Implement granular access controls to restrict data access to authorized users or processes.

- Employ secure user authentication methods and role-based authorization for different types of data manipulations.
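The masking step in the list above can be sketched as follows. This is a minimal illustration using only the Python standard library, under the assumption that records are plain dictionaries; the field names, the sample records, and the `SECRET_KEY` value are hypothetical, and a real deployment would load the key from a secrets manager and follow the organization's own anonymization policy.

```python
import hashlib
import hmac
from collections import defaultdict

# Hypothetical secret; in practice it would come from a secrets manager.
SECRET_KEY = b"replace-with-a-managed-secret"


def pseudonymize(value: str) -> str:
    """Replace an identifier with a keyed hash so the raw value is never shared."""
    digest = hmac.new(SECRET_KEY, value.encode("utf-8"), hashlib.sha256)
    return digest.hexdigest()[:12]


def mask_rows(rows):
    """Pseudonymize the customer identifier and drop the email field."""
    return [
        {
            "customer": pseudonymize(row["customer_id"]),
            "region": row["region"],
            "amount": row["amount"],
        }
        for row in rows
    ]


def aggregate_by_region(rows):
    """Share only regional totals instead of row-level records."""
    totals = defaultdict(float)
    for row in rows:
        totals[row["region"]] += row["amount"]
    return dict(totals)


# Made-up sample records for illustration only.
sales = [
    {"customer_id": "C-1001", "email": "a@example.com", "region": "EMEA", "amount": 120.0},
    {"customer_id": "C-1002", "email": "b@example.com", "region": "EMEA", "amount": 80.0},
    {"customer_id": "C-1001", "email": "a@example.com", "region": "APAC", "amount": 50.0},
]

masked = mask_rows(sales)
regional_totals = aggregate_by_region(sales)
```

Pseudonymization keeps rows linkable (the same customer always maps to the same token) without exposing the identifier, while aggregation removes row-level detail altogether. Which of the two is appropriate depends on how much detail the AI model actually needs.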

In other words, organizations need to create a strong data governance process, one that establishes full data traceability across the organization.

Each created dataset needs to have a clear owner and a list of users with access/modification permissions. It should also be validated against the organization's compliance rules. Modern solutions like Microsoft Purview and Microsoft Fabric help establish a clear data ownership structure, paired with scalable, secure data-sharing practices. These tools can help control which data is consumed by GenAI models and prevent accidental disclosures.
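As a rough illustration of the ownership and permission model described here, the sketch below represents a dataset record and the checks that could gate whether it reaches a GenAI model. The `DatasetEntry` class, its fields, and the user names are hypothetical; this is not the schema or API of Microsoft Purview or Microsoft Fabric.

```python
from dataclasses import dataclass, field


@dataclass
class DatasetEntry:
    """Hypothetical catalog record for one dataset."""
    name: str
    owner: str
    readers: set = field(default_factory=set)
    editors: set = field(default_factory=set)
    compliance_validated: bool = False
    contains_sensitive_data: bool = True  # assume sensitive until proven otherwise


def can_read(entry: DatasetEntry, user: str) -> bool:
    """Owners, editors, and readers may read the dataset."""
    return user == entry.owner or user in entry.editors or user in entry.readers


def can_modify(entry: DatasetEntry, user: str) -> bool:
    """Only the owner and editors may modify the dataset."""
    return user == entry.owner or user in entry.editors


def can_send_to_genai(entry: DatasetEntry, user: str) -> bool:
    """Allow sharing with a GenAI model only if the user may read the dataset,
    compliance validation has passed, and no sensitive data remains."""
    return (
        can_read(entry, user)
        and entry.compliance_validated
        and not entry.contains_sensitive_data
    )


sales_summary = DatasetEntry(
    name="sales_summary",
    owner="dana",
    readers={"lee"},
    compliance_validated=True,
    contains_sensitive_data=False,
)
customer_raw = DatasetEntry(name="customer_raw", owner="dana", editors={"sam"})
```

Defaulting new datasets to "sensitive" and "not validated" means that a dataset is blocked from GenAI consumption until someone explicitly clears it, which is one way to reduce accidental disclosures.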

Implement Quality Assurance Processes for Developed Models

Generative AI models gain their “knowledge” from their creators. Issues at the design level lead to subpar model performance and biased results. Without extensive quality assurance and model observability, unconscious biases will enter the new models.

Amazon once built an AI resume rating tool that systematically downgraded resumes from female candidates. An early version of the Google Photos image recognition algorithm mislabeled photos of Black people. In data analytics, such flaws can result in plainly wrong calculations or data interpretations, as happened when Bing AI presented inaccurate analyses of earnings reports for selected companies.

To avoid biases in AI model design, it is a good practice to:

- Select the best-fit learning model for the selected use case. GenAI models can be built with unsupervised or semi-supervised learning techniques. Each has its pros and cons when it comes to the quality and accuracy of produced outputs.

- Train models on datasets that are representative of your organization and industry. The data provided must be comprehensive and balanced. It’s a good idea to train models on proprietary data rather than public datasets.

- Implement model observability to analyze the model’s behavior, data, and performance across its lifecycle. Observability helps detect and investigate performance drift and anomalies in a timely fashion.
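One common way to quantify drift, as mentioned in the observability point above, is the Population Stability Index (PSI), which compares how a value is distributed in a baseline period and in a recent period. The sketch below is a minimal standard-library version; the bin edges, the sample scores, and the 0.2 alert threshold are assumptions chosen for illustration (0.2 is a frequently quoted rule of thumb, not a fixed standard).

```python
import math
from bisect import bisect_right


def bin_shares(values, edges):
    """Return the share of values falling into each bin defined by the edges."""
    counts = [0] * (len(edges) - 1)
    for v in values:
        # Clamp the index so out-of-range values land in the first or last bin.
        i = min(max(bisect_right(edges, v) - 1, 0), len(counts) - 1)
        counts[i] += 1
    # A small floor avoids division by zero and log(0) for empty bins.
    return [max(c / len(values), 1e-6) for c in counts]


def population_stability_index(baseline, current, edges):
    """PSI = sum over bins of (actual - expected) * ln(actual / expected)."""
    expected = bin_shares(baseline, edges)
    actual = bin_shares(current, edges)
    return sum((a - e) * math.log(a / e) for a, e in zip(actual, expected))


EDGES = [0.0, 0.25, 0.5, 0.75, 1.0]  # assumed bins for a score between 0 and 1
DRIFT_THRESHOLD = 0.2                # assumed alert level; tune per use case

# Made-up model scores for illustration only.
baseline_scores = [0.1, 0.2, 0.3, 0.4, 0.6, 0.7, 0.8, 0.9]
stable_scores = [0.15, 0.22, 0.35, 0.45, 0.55, 0.65, 0.85, 0.95]
drifted_scores = [0.6, 0.7, 0.8, 0.85, 0.9, 0.9, 0.95, 0.99]

psi_stable = population_stability_index(baseline_scores, stable_scores, EDGES)
psi_drifted = population_stability_index(baseline_scores, drifted_scores, EDGES)
drift_detected = psi_drifted > DRIFT_THRESHOLD
```

In this toy data the stable period fills the bins in the same proportions as the baseline, so its PSI is zero, while the drifted period concentrates in the upper bins and crosses the threshold. A monitoring job would typically compute such a metric on a schedule and raise an alert for investigation.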

Data science teams can also take advantage of open-source toolkits for bias detection and mitigation in AI models, such as AI Fairness 360 or the What-If Tool.
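To give a sense of what such bias checks measure, the sketch below computes a disparate impact ratio by hand: the rate of favorable outcomes for one group divided by the rate for another. It deliberately does not use the API of either toolkit; the group data is made up, and the 0.8 cut-off follows the widely cited "four-fifths" rule of thumb, which is an assumption rather than a universal standard.

```python
def selection_rate(outcomes):
    """Share of favorable outcomes (1 = favorable, 0 = unfavorable)."""
    return sum(outcomes) / len(outcomes)


def disparate_impact(unprivileged, privileged):
    """Ratio of favorable-outcome rates; 1.0 means parity between the groups."""
    return selection_rate(unprivileged) / selection_rate(privileged)


FAIRNESS_THRESHOLD = 0.8  # assumed cut-off based on the four-fifths rule of thumb

# Made-up model decisions for two groups, for illustration only.
group_a_decisions = [1, 1, 1, 0, 1, 0, 1, 1, 0, 1]  # 7 of 10 favorable
group_b_decisions = [1, 0, 0, 0, 1, 0, 0, 1, 0, 0]  # 3 of 10 favorable

ratio = disparate_impact(group_b_decisions, group_a_decisions)
needs_review = ratio < FAIRNESS_THRESHOLD
```

A ratio well below the threshold, as in this toy data, does not prove the model is biased, but it is a signal that the training data and model design deserve a closer look.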

Build a Corporate Culture of AI Usage

AI makes certain people uncomfortable for one reason or another. Without a clear understanding of the benefits and use cases of GenAI, adoption will always remain an uphill battle.

At the technology level, organizations will need to establish a better data management infrastructure for continuous dataset creation and self-service access to insights. This step alone requires major transformations both in terms of supporting infrastructure and in supporting processes.

At the process level, leaders will need to identify the problems that GenAI can solve effectively. The adoption process should be centered on solving actual business challenges rather than treating AI as an end in itself.

At the people level, your employees will need to be educated on the purpose, benefits, constraints, and risks of using the available AI solutions, as well as briefed on security and privacy best practices.

Conclusion

Generative AI and Power BI form a powerful duo, bringing a true transformation to data analysis. AI streamlines workflows, unearths hidden insights, and generates clear reports. Power BI's user-friendly interface and Azure integration provide a platform to use these AI capabilities.

Security, model quality, and an AI-ready culture are integral parts of this tech duo. As AI evolves, Power BI will adapt, giving data teams the opportunity to unlock the full potential of their information.
