Introducing TestOps: a New Approach to Quality Assurance

I’ve been working as a test lead and test automation engineer for three years now. However, although I have been coding almost daily for the past year, I haven’t written a single automated test. To understand why, I analyzed my work activities and discovered that much of my routine falls outside the strict definition of my role. There seemed to be no specific name for my typical scope of tasks: I set up infrastructure, monitor it, test microservices, and run them locally in Docker. Apart from development, this is a regular DevOps task list.

Looking for a definition of my current work scope, I immediately associated it with DevOps, the only difference being testing instead of development. It could therefore be called TestOps. This conclusion raised a series of questions:

- Does this term already exist?

- Does it have a clear definition?

- Is it a role or an approach to work?

My colleagues and I decided to approach the questions systematically:

- First, it was necessary to review the definition of DevOps.

- Then, we had to research the already existing information about TestOps online and formulate our definition.

- After that, we tried to predict the skills and technologies testers would require to work productively over the next five years.

What is DevOps

DevOps (Development and Operations) originated as a software development methodology that promotes close interaction between developers and IT operations professionals (e.g., system and network administrators) in order to reduce development time and improve quality. It is worth noting, however, that we do not consider this goal exclusive to DevOps: it applies to everybody involved in software development, regardless of role.

For instance, a developer’s work might not be limited to writing code: developers might also need to explain how to launch the software on the server, how to configure it, and where to find the logs. Similarly, an operations engineer might be involved in debugging as well as in setting up the server. The Agile Manifesto values individuals and interactions over processes and tools. If you doubt your expertise in something, do not hesitate to consult a colleague, as we all share a common goal.

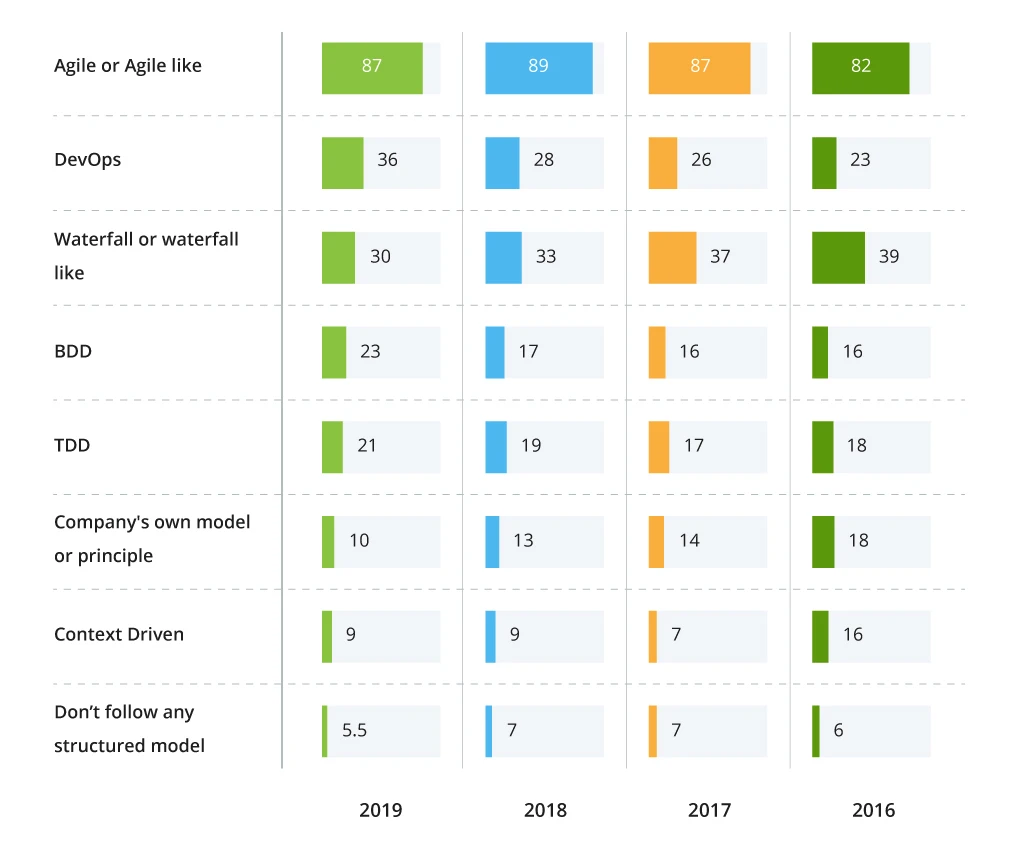

By now, DevOps has evolved from a methodology into a separate role: it is convenient to have a team member who can cover the full cycle of development and operation. At the same time, DevOps is becoming more and more popular as a testing model, reaching 36% in 2019, according to the survey.

Development and Testing Models, %

Why Dev-Test-Ops?

It is only logical to ask whether introducing one or more universal roles would over-complicate development. After all, the division of labor has been proven to increase efficiency. To answer this question, it is necessary to analyze the current trends in software development and operation.

Within the past ten years, infrastructure solutions have come a long way. We used to have physical servers where all software, from OS to applications, had to be installed and maintained manually. Today, infrastructure tasks are gradually being delegated to the provider. Instead of buying a server, one can rent it. OS and mail services that require installation are replaced with off-the-shelf solutions. Writing a complete application becomes unnecessary since it is enough to provide the business logic as lambda functions, and delegate all other operations.

This rapid evolution has resulted in the coinage of multiple new terms and abbreviations such as IaaS, PaaS, SaaS, CaaS, and many more that you may not even be familiar with.

The current trend is to simplify the development process and decrease production time. At the same time, infrastructure becomes more complicated. Flexibility is achieved by increasing the number of configuration files, and sometimes it requires a lot of effort to find the causes of problems that users eventually encounter.

Thus, the current situation demands a specialist that, besides knowing testing principles and a market-relevant technology stack (databases, web services, programming language), also understands the general structure of the product.

What is TestOps

Currently, there is no uniform understanding of what TestOps is. The definitions range from very abstract — “a methodology that promotes close interaction between testers and IT experts” — to more specific ones:

- Expansion of the role of a test automation engineer with new responsibilities – to set up and maintain the automated testing environment. This definition implies that to perform automated tests, an engineer packs tests in Docker, launches the software to be tested, runs the tests, collects the results, and provides the report;

- Expansion of software testing types with mandatory load and reliability testing, job enlargement for the tester to include the responsibility to monitor all systems both in test environments and in production;

- A methodology that promotes testing in production only to save resources (space) and time usually spent on creating test data. This allows more time to be spent on analyzing monitoring metrics, as well as making changes that affect small test groups rather than all users at once.

The latter approach, though peculiar as it sounds, has been successfully adopted as part of the development process on a few projects I know.

After considering all of the above, we will try to word our definition.

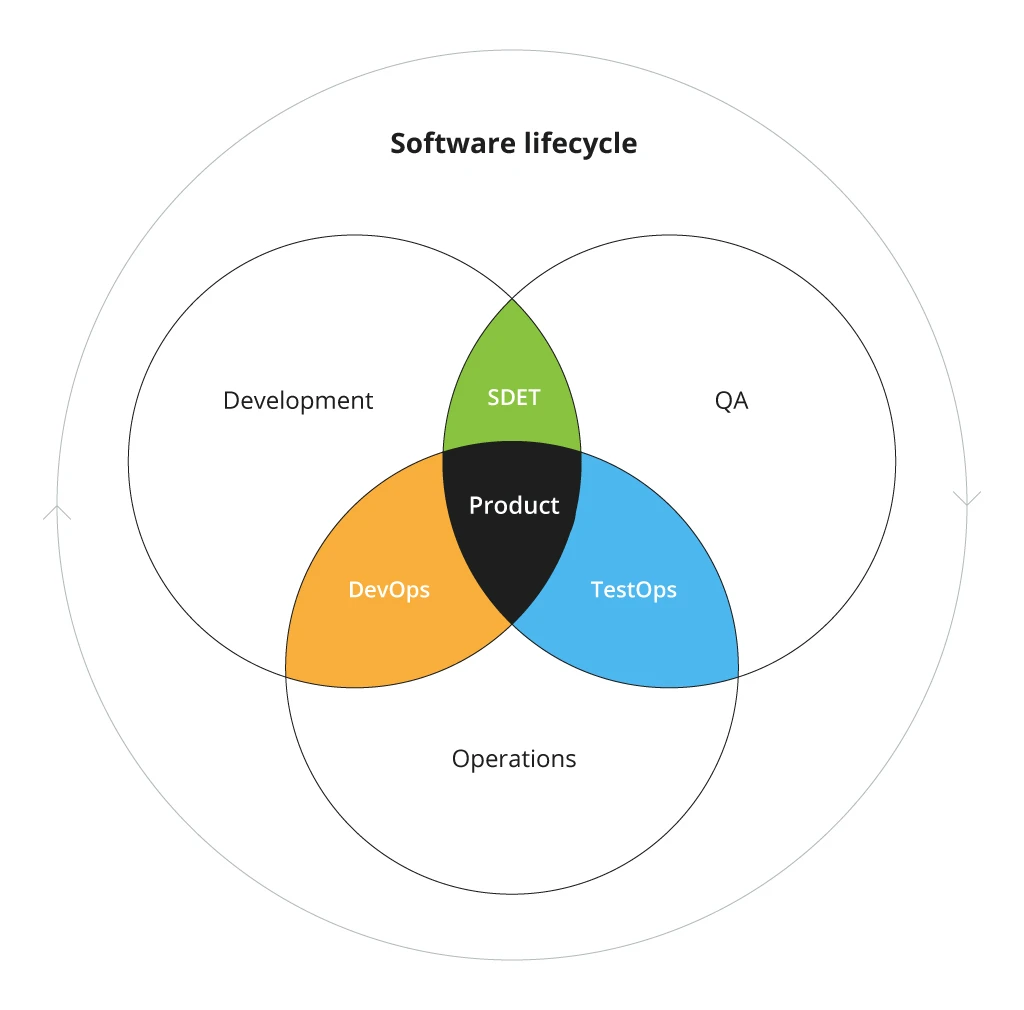

Place of TestOps in a Software Lifecycle

TestOps is a methodology that promotes close collaboration between QA, Dev and Ops, in order to reduce development costs and ensure quality. It takes into account current trends in software development and support, and outlines the following main activities:

- test data preparation;

- functional testing;

- load and reliability testing;

- security testing;

- CI/CD setup.

Further on, we would like to elaborate on the priority areas of testing listed above.

Test Data

Preparing data for testing has always been a key task for QA specialists. Specifying concrete data is what turns a scenario into a usable test case. With the growing popularity of microservice solutions and the widespread integration of multiple systems, it becomes more and more challenging to gather valid data. To ensure reliable results, the test data must be consistent across all the systems. Therefore, the first stage of testing is to prepare universal data sets for all systems, along with mechanisms to update them or restore them to their original state.

Example:

Let’s assume that your team works on a large-scale project that consists of several services, each with its own DB, and a few third-party integrations. If the objects of the first service refer to object IDs in the second service and in third-party systems, then these specific objects must be created. Moreover, data preparation is not limited to creating data. If the data to be created during the test is supposed to be unique, you have to make sure that it is removed beforehand from all services where it may be present.
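The clean-up-then-seed routine described above can be sketched as follows. This is only an illustration under stated assumptions: two in-memory SQLite databases stand in for the databases of two services, and the table names, columns, and the `reset_test_data` helper are hypothetical.

```python
import sqlite3


def make_service_db(schema: str) -> sqlite3.Connection:
    """Create an in-memory database that stands in for one service's DB."""
    conn = sqlite3.connect(":memory:")
    conn.executescript(schema)
    return conn


# Hypothetical schemas: orders refer to customers by ID across services.
customers_db = make_service_db(
    "CREATE TABLE customers (id INTEGER PRIMARY KEY, email TEXT UNIQUE);"
)
orders_db = make_service_db(
    "CREATE TABLE orders (id INTEGER PRIMARY KEY, customer_id INTEGER);"
)


def reset_test_data(customer_id: int, email: str, order_id: int) -> None:
    """Remove leftovers first, then seed a consistent data set in both services."""
    # Clean-up: the email must be unique, so find and delete stale copies first.
    stale_ids = [
        row[0]
        for row in customers_db.execute(
            "SELECT id FROM customers WHERE email = ? OR id = ?",
            (email, customer_id),
        )
    ]
    for stale_id in stale_ids:
        orders_db.execute("DELETE FROM orders WHERE customer_id = ?", (stale_id,))
    customers_db.execute(
        "DELETE FROM customers WHERE email = ? OR id = ?", (email, customer_id)
    )
    orders_db.execute("DELETE FROM orders WHERE id = ?", (order_id,))
    # Seed: create the referenced object first, then the object that refers to it.
    customers_db.execute(
        "INSERT INTO customers (id, email) VALUES (?, ?)", (customer_id, email)
    )
    orders_db.execute(
        "INSERT INTO orders (id, customer_id) VALUES (?, ?)", (order_id, customer_id)
    )
    customers_db.commit()
    orders_db.commit()


reset_test_data(1, "qa@example.com", 100)
reset_test_data(1, "qa@example.com", 100)  # safe to repeat before every test run
```

Because the routine deletes before it inserts, it can be run before every test without violating the uniqueness constraint.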

Functional Testing

According to our statistics, 80% of all tests performed are functional. In our opinion, this figure is accurate and is unlikely to change in the near future. The focus, however, is shifting from testing the functions of individual systems or services to testing the system as a whole. Therefore, the testing should be structured traditionally:

- Component testing — each system/service is tested separately. Contract testing can also be included here.

- Integration testing — the interaction of several services. At this stage, we will require a pre-provisioned set of consistent data.

- System testing — E2E testing, i.e., verifying whether the whole system functions as expected and handles the expected errors.

At the component and integration levels, test automation is very efficient when it relies on unit tests and web service tests.

At the integration and system levels, transactional testing is essential, because services can ensure transactionality in different ways. At the very least, testers should be aware of these approaches and verify that data remains accurate and consistent in both positive and negative scenarios.

At the system level, it is necessary to test the basic scenarios of system usage, either manually or using automated UI tests.
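As an illustration of a check at the component level, a contract test can be reduced to comparing a service response with the fields its consumer relies on. The sketch below is a simplified assumption, not a replacement for a dedicated contract testing tool; `ORDER_CONTRACT` and the sample payloads are hypothetical.

```python
# Hypothetical contract: fields the consumer relies on and their expected types.
ORDER_CONTRACT = {"id": int, "status": str, "items": list}


def contract_violations(payload: dict, contract: dict) -> list:
    """Return a list of human-readable problems; an empty list means the payload conforms."""
    problems = []
    for field, expected_type in contract.items():
        if field not in payload:
            problems.append(f"missing field: {field}")
        elif not isinstance(payload[field], expected_type):
            actual = type(payload[field]).__name__
            problems.append(f"wrong type for {field}: {actual}")
    return problems


# Extra fields are tolerated: the consumer only cares about what it uses.
good_response = {"id": 7, "status": "paid", "items": [], "extra": "ignored"}
bad_response = {"id": "7", "status": "paid"}

good_problems = contract_violations(good_response, ORDER_CONTRACT)
bad_problems = contract_violations(bad_response, ORDER_CONTRACT)
```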

Example:

While a lot has already been written about testing contracts and web services, transactional testing requires more research, because it is highly specific and depends on the implementation. If an object passes through several services, changing its state and properties, it is worth testing how the system behaves when one of the services malfunctions or mishandles the object. Is it possible to complete processing in such a case? Is it possible to correct the status if it is invalid? Is it possible to cancel all operations and roll the system back to its initial state?
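One way to explore the last question is to model each service call together with the action that undoes it, then simulate a failure in the middle of the chain. The sketch below assumes a compensation-based approach; the step functions and the `run_with_compensation` helper are hypothetical, and a real system may ensure transactionality differently.

```python
from typing import Callable, List, Tuple

# Each step is (name, action, compensation); all three are illustrative.
Step = Tuple[str, Callable[[dict], None], Callable[[dict], None]]


def run_with_compensation(obj: dict, steps: List[Step]) -> bool:
    """Apply steps in order; on failure, undo the completed steps in reverse order."""
    done: List[Step] = []
    for step in steps:
        _name, action, _compensate = step
        try:
            action(obj)
            done.append(step)
        except Exception:
            for _, _, compensate in reversed(done):
                compensate(obj)
            return False
    return True


def reserve(obj: dict) -> None:
    obj["status"] = "reserved"


def unreserve(obj: dict) -> None:
    obj["status"] = "new"


def charge(obj: dict) -> None:
    raise RuntimeError("payment service is down")  # simulated malfunction


def refund(obj: dict) -> None:
    obj["status"] = "reserved"


order = {"id": 1, "status": "new"}
completed = run_with_compensation(
    order, [("reserve", reserve, unreserve), ("charge", charge, refund)]
)
```

A negative test then asserts that `completed` is false and that the object is back in its initial state, which is exactly the rollback question raised above.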

Load and Reliability Testing

The behavior and performance of a system are likely to change under load. As microservices tend to be used for scalability, it is advisable to always conduct load testing, regardless of whether such requirements were stated explicitly. Both individual microservices and the whole system (to identify bottlenecks) can be objects of load testing. The minimum goal is to analyze system performance and provide information to the team and the customer. The maximum goal is to predict system capabilities at different scales and prevent defects associated with high load.
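For the minimum goal, even a small script that sends concurrent requests and summarizes latency can give the team useful numbers. The sketch below uses only the Python standard library; `call_service` is a hypothetical stand-in that sleeps instead of calling a real service, and the request counts are arbitrary.

```python
import time
from concurrent.futures import ThreadPoolExecutor
from statistics import quantiles


def call_service(_: int) -> float:
    """Stand-in for one request; returns the observed latency in seconds."""
    start = time.perf_counter()
    time.sleep(0.001)  # replace with a real call to the service under test
    return time.perf_counter() - start


def run_load(total_requests: int, concurrency: int) -> dict:
    """Send requests from a pool of worker threads and summarize the latencies."""
    with ThreadPoolExecutor(max_workers=concurrency) as pool:
        latencies = list(pool.map(call_service, range(total_requests)))
    cuts = quantiles(latencies, n=100)  # 99 cut points: cuts[k - 1] is the k-th percentile
    return {
        "count": len(latencies),
        "p50": cuts[49],
        "p95": cuts[94],
        "max": max(latencies),
    }


report = run_load(total_requests=200, concurrency=20)
```

Dedicated tools remain the better choice for sustained or distributed load; a script like this is mainly useful for a quick first measurement.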

The next point of discussion is reliability, which is closely connected with the system’s efficiency. Our goals are to check if:

- the system is able to handle errors under load;

- microservices can restart in case of an error;

- data integrity is assured;

- asynchronous operations are performed correctly.

The latter is a typical problem a tester may encounter. Asynchronous operations, such as a process that runs once an hour, may not have enough time to complete when dealing with large amounts of data and a heavy load. In such a case, the tester should ensure that the data won’t be lost or processed twice.
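A check for this scenario can be sketched by interrupting a run part-way and verifying that the next run picks up only the remaining records. The `BatchProcessor` class below is a hypothetical model: it tracks processed IDs in memory, whereas a real system would need durable storage for that bookkeeping.

```python
class BatchProcessor:
    """Processes each record at most once, even if a run is cut short and repeated."""

    def __init__(self) -> None:
        self.processed_ids = set()  # in a real system this would be durable storage
        self.results = []

    def run(self, records: list, budget: int) -> int:
        """Process up to `budget` unprocessed records; return how many are left."""
        pending = [r for r in records if r["id"] not in self.processed_ids]
        for record in pending[:budget]:
            self.results.append(record["value"] * 2)
            self.processed_ids.add(record["id"])
        return max(len(pending) - budget, 0)


records = [{"id": i, "value": i} for i in range(5)]
processor = BatchProcessor()
left_after_first = processor.run(records, budget=3)   # the run ends after 3 records
left_after_second = processor.run(records, budget=3)  # the next run handles the rest
```

The test then verifies two properties: nothing is left unprocessed after the second run, and no record appears in the results twice.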

It is worth mentioning that there is a dedicated discipline with its own set of tools, Chaos Engineering, for reliability testing of distributed and microservice systems.

Security

A modern approach to software development implies that a vast amount of code is written and released in a short period. In such conditions, we face new security challenges associated with unknown vulnerabilities. Traditional manual security procedures cannot cope with the growing demand and provide the desired level of information security.

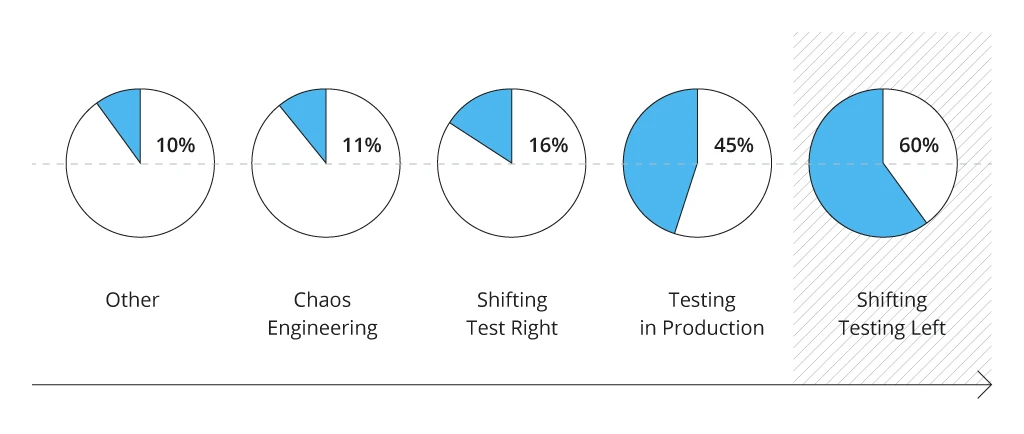

One way to overcome these challenges is to introduce automated security testing at the early stages of development, instead of the traditional post-release control. Recent surveys show a tendency to shift testing closer to the development stage, which can only benefit security automation.

Adoption of Testing Processes or Techniques by Organization

The concept of TestOps promotes the idea of automating security tests throughout the test cycle. Moreover, security tests can be applied to ensure pipeline security: for example, scanning containers during CD and verifying system components during CI helps ensure system security and stability throughout the whole cycle.
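In a pipeline, the result of such a scan usually has to be turned into a pass or fail decision. The sketch below shows one possible gate; the findings format, the severity scale, and the `gate` function are assumptions made for illustration and do not correspond to the output of any particular scanner.

```python
# Hypothetical severity scale, ordered from least to most severe.
SEVERITY_ORDER = ["low", "medium", "high", "critical"]


def gate(findings: list, fail_at: str = "high") -> list:
    """Return findings at or above the threshold; an empty list means the stage may pass."""
    threshold = SEVERITY_ORDER.index(fail_at)
    return [f for f in findings if SEVERITY_ORDER.index(f["severity"]) >= threshold]


# Hypothetical findings, as a scanning step might report them.
findings = [
    {"component": "base-image", "severity": "low"},
    {"component": "web-framework", "severity": "critical"},
]
blocking = gate(findings)
```

A pipeline step could fail the build whenever `blocking` is not empty, while lower-severity findings are only reported.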

CI/CD setup

One of the vital TestOps markers is the ability to independently configure the process to ensure product quality, and understand existing processes to improve them.

Example:

We have often encountered situations in which the DevOps specialists involved in the project are not available because they are busy supporting test and production environments. To speed things up, you can set up a startup process for automated tests on your own or even add the tests to an existing pipeline.
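A startup process for automated tests can be as small as a script that runs a suite and reports the outcome as an exit code, which most CI servers interpret as success or failure of a step. The sketch below uses Python's built-in `unittest`; the `SmokeTest` case is a placeholder, and how the script is attached to a pipeline depends on the CI server in use.

```python
import unittest


class SmokeTest(unittest.TestCase):
    """Placeholder test case; real checks against the system under test go here."""

    def test_placeholder(self) -> None:
        self.assertEqual(1 + 1, 2)


def run_suite() -> int:
    """Run the suite and return 0 on success or 1 on failure, like a CLI tool."""
    suite = unittest.defaultTestLoader.loadTestsFromTestCase(SmokeTest)
    result = unittest.TextTestRunner(verbosity=0).run(suite)
    return 0 if result.wasSuccessful() else 1


exit_code = run_suite()
# In a pipeline step, the script would end with: raise SystemExit(exit_code)
```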

TestOps Skills

In this section, we would like to discuss the knowledge and skills required today to fulfill the potential role of TestOps:

- Databases: expertise in SQL and NoSQL;

- Basic understanding of modern data exchange approaches and protocols: REST, SOAP, JMS, WebSocket;

- Knowledge of data formats: XML, JSON, CSV;

- Unix Systems skills;

- Scripting skills in OS: bash, PowerShell;

- Understanding of OS: how software works, what is a service, etc.;

- Coding skills: Python, JS. Scripting languages are preferable, as the ability to write a script on demand often appears more important than the ability to design an application;

- Load Testing Skills. It is not enough to be knowledgeable in tools such as JMeter, Locust, Gatling. The TestOps role requires an understanding of the type of metrics that need to be collected, how to collect them, and under what conditions;

- Automation skills. It is worth noting that this term has a broader meaning than writing automated tests only: it includes automating any of the team’s work;

- CI / CD configuration skills (Jenkins, TeamCity).

Recommendations for TestOps

One of the main ways to follow the TestOps methodology is to stay at the cutting edge of evolving technologies. In order to keep up with industry trends, it is necessary to regularly:

- refresh your knowledge;

- read up on new approaches to software development and testing. Tomorrow you may need to test developments that are only being talked about today;

- attend meetups and conferences. There you can encounter ideas that can significantly enrich your concepts of testing and related disciplines;

- share your knowledge with colleagues at conferences and in publications. This appears to be one of the most challenging tasks, as we tend to underestimate the significance and potential interest of our projects. However, as every issue usually has a range of solutions, the one based on your unique experience may be the most applicable.

Afterword

This article is only an informed attempt to define a concept, which has yet to be fully developed in the professional community. It is an attempt to refine this concept, so it is consistent and precise, at least in the context of our team. We are fortunate enough to have a working environment and an out-of-work space that welcomes the discussion of new ideas, so we have already had the opportunity to receive some useful feedback on the definition of TestOps given in this article. We look forward to continuing this lively discussion with the community.
