Containers vs VMs: What’s Better for Microservices?

Virtual machines (VMs) remain foundational in software engineering, though newer solutions, namely containers, are challenging their popularity. Learn the main differences between containers and VMs and when to use each technology.

Containers vs VMs: What Are the Differences?

Containers and virtual machines provide the necessary resources to run an application.

A container is a software package that includes all the dependencies (binary code, libraries, and configuration files) required to run a software application. It creates a self-contained environment that lets you run the same code consistently across different machines and environments.

A virtual machine (VM) is a digital emulation of a computational system with predefined CPU, disk, networking parameters, and an operating system. Virtualization enables the sharing of resources like RAM, CPU, disk, or networking among several VMs. Hosted locally or in the cloud, VMs allow you to create an isolated environment to deploy an application.

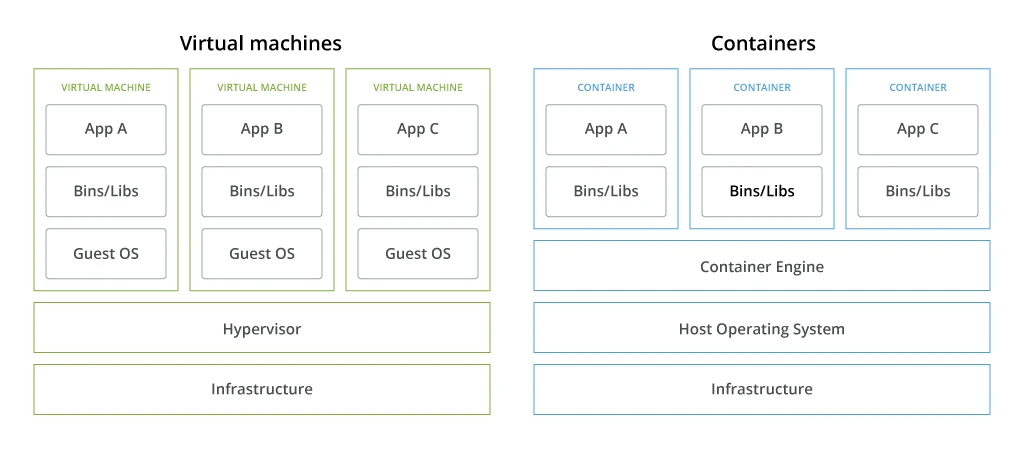

The main difference is that virtual machines replicate an entire machine down to the hardware layers, whereas containers virtualize only software layers above the operating system.

You can create multiple virtual computers from the hardware elements of a single computer (or, more frequently, a bare metal server) by using hypervisor software. The hypervisor lets each VM access the CPU, memory, and storage of the host machine (infrastructure). Once deployed, you can configure and update the guest operating system and its applications on a specific VM without affecting other machines.

VMs offer good isolation, but each VM needs its own operating system instance, memory, disk space, and CPU resources, making them more resource-intensive.

Unlike VMs, containers only virtualize the operating system. Each container has everything an application needs to run on any infrastructure. Containers share the host operating system's kernel, but each has its own isolated processes, filesystem, and networking stack.
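One way to see the shared kernel for yourself is to run the same short script on the host and inside a container: the kernel release it reports should match, whereas a VM would report the kernel of its guest operating system. The sketch below is illustrative only. The `/.dockerenv` marker file and the cgroup keywords are common heuristics rather than a guaranteed detection method, and the function names are hypothetical.

```python
import platform
from pathlib import Path


def looks_like_container(root: Path = Path("/")) -> bool:
    """Best-effort guess at whether this process runs inside a container.

    Heuristic only: Docker usually creates /.dockerenv, and cgroup paths
    often mention the runtime. Neither signal is guaranteed.
    """
    if (root / ".dockerenv").exists():
        return True
    cgroup_file = root / "proc" / "1" / "cgroup"
    try:
        text = cgroup_file.read_text()
    except OSError:
        return False  # Not Linux, or /proc is unavailable.
    return any(marker in text for marker in ("docker", "kubepods", "containerd"))


def environment_report() -> dict:
    """Collect the kernel release and the container guess in one place."""
    return {
        "kernel_release": platform.release(),  # The host's kernel when run in a container.
        "system": platform.system(),
        "in_container": looks_like_container(),
    }


if __name__ == "__main__":
    for key, value in environment_report().items():
        print(f"{key}: {value}")
```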

Multiple containers can run on the same host without interference using a container engine — an intermediary that distributes system resources among applications. You can deploy multiple containers on one virtual machine without much performance degradation.
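On Linux, container engines typically rely on kernel control groups (cgroups) to enforce the CPU and memory share each container receives. As a rough sketch, assuming a cgroup v2 host where the limits are exposed in `/sys/fs/cgroup/cpu.max` and `/sys/fs/cgroup/memory.max`, a process can read the limits applied to it. The helper names are hypothetical, and other setups (cgroup v1, non-Linux hosts) expose this information differently.

```python
from pathlib import Path
from typing import Optional

CGROUP_ROOT = Path("/sys/fs/cgroup")  # Assumed cgroup v2 mount point.


def parse_cpu_max(text: str) -> Optional[float]:
    """Convert cpu.max content ("<quota> <period>" or "max <period>") to a CPU count."""
    quota, period = text.split()
    if quota == "max":
        return None  # No CPU limit set.
    return int(quota) / int(period)


def parse_memory_max(text: str) -> Optional[int]:
    """Convert memory.max content (a byte count or "max") to an integer limit."""
    value = text.strip()
    return None if value == "max" else int(value)


def _read(path: Path) -> Optional[str]:
    """Return the file content, or None when the file cannot be read."""
    try:
        return path.read_text()
    except OSError:
        return None


def read_limits(root: Path = CGROUP_ROOT) -> dict:
    """Read each limit independently; None means unlimited or unavailable."""
    cpu_text = _read(root / "cpu.max")
    memory_text = _read(root / "memory.max")
    return {
        "cpus": parse_cpu_max(cpu_text) if cpu_text else None,
        "memory_bytes": parse_memory_max(memory_text) if memory_text else None,
    }


if __name__ == "__main__":
    print(read_limits())
```

For example, a container started with `docker run --cpus=1.5 --memory=512m` would typically report 1.5 CPUs and 536870912 bytes here, while an unconstrained process reports `None` for both.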

Generally, containers take less time to deploy, consume fewer resources, and can be started or stopped quickly (unlike VMs). All of these characteristics make them a popular choice for microservices architecture.

Containers vs VMs Comparison Table

| | Containers | Virtual machines |
|---|---|---|
| Virtualization | Operating system | Underlying physical infrastructure |
| Resource provisioning | The container engine coordinates resource allocation with the OS. | The hypervisor coordinates resource allocation with the OS or hardware. |
| Deployment speed | Fast. Builds and modifications are fast because you only generate high-level software. | Moderate. Builds and modifications can take time because you need to regenerate a full-stack system each time. |
| Resource utilization | Low. Containers are lightweight (under several MB) and thus require less storage space for deployment and operations. | Moderate. As VMs require more storage space, you may need to provision extra resources on-premises. Cloud VMs eliminate this constraint. |
| Isolation | Moderate. Containers use the same kernel as the host operating system, making them susceptible to security vulnerabilities if the host kernel is compromised. | High. Each VM has a separate kernel, allowing for strong security and isolation between workloads. |
| Portability | High. Containerized applications can run consistently across different environments and OS. | Moderate. VM image compatibility across environments requires more effort. |

Why Do Containers Work Best for Microservices?

Microservices architecture popularized the idea of modularity. Instead of being designed as a single codebase, an application is organized as a set of specialized, loosely coupled microservices. Each microservice can be written in its own tech stack, tested in isolation, and deployed independently as a container without relying on other components of the application.
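To make the idea concrete, here is a minimal sketch of what one such independently deployable service might look like, using only the Python standard library. The "orders" service name, the `/health` route, and port 8080 are assumptions chosen for illustration, not something prescribed by any framework or by the examples in this article.

```python
import json
from http.server import BaseHTTPRequestHandler, ThreadingHTTPServer


class OrderServiceHandler(BaseHTTPRequestHandler):
    """Hypothetical 'orders' microservice exposing a single health endpoint."""

    def do_GET(self):
        if self.path == "/health":
            self._send_json(200, {"service": "orders", "status": "ok"})
        else:
            self._send_json(404, {"error": "not found"})

    def _send_json(self, status: int, payload: dict) -> None:
        body = json.dumps(payload).encode("utf-8")
        self.send_response(status)
        self.send_header("Content-Type", "application/json")
        self.send_header("Content-Length", str(len(body)))
        self.end_headers()
        self.wfile.write(body)

    def log_message(self, format, *args):
        pass  # Keep the example quiet; a real service would log requests.


def make_server(host: str = "0.0.0.0", port: int = 8080) -> ThreadingHTTPServer:
    """Create the server without starting it, so callers control its lifecycle."""
    return ThreadingHTTPServer((host, port), OrderServiceHandler)
```

Calling `make_server().serve_forever()` starts the service. Packaged in its own container image, a service like this can be built, tested, and redeployed without touching the rest of the application, and an orchestrator can poll a health route of this kind to decide when to restart or replace an instance.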

Containerization promotes this idea of modularity. Developers can use the best technology for the task at hand. If a containerized microservice has an issue, developers can eliminate, fix, and reintegrate it without impacting other microservices.

Using container orchestration platforms like Kubernetes and Docker Swarm, developers can easily launch, retire, and replace different containerized apps at scale. Airbnb’s team of 1,000 engineers, for example, can concurrently configure and deploy over 250 critical services to Kubernetes (at a frequency of 500 deploys per day), using one tool for automatic Kubernetes service configuration generation.

There are many good reasons to opt for cloud-native architectures — faster time-to-market for new products, lower operating and maintenance costs, reduced downtime, high system scalability, and greater operational resilience. Adoption of DevOps best practices and containerization has helped our client substantially decrease time-to-market and save over €80 million in infrastructure costs.

Container Use Cases

- Cloud-native application development. Thanks to faster loading times and high scalability potential, containers are the go-to choice for cloud-native application development.

- Legacy application modernization. Containerization helps “lift and shift” legacy applications to the cloud, although refactoring the application unlocks more of the benefits of a container environment, such as more efficient resource utilization, higher fault tolerance, and improved scalability.

- Batch jobs. Thanks to fast deployment and easy replication, containers are a popular choice for supporting similar background processes (e.g., ETL jobs for data analytics projects, automatic data backups, or video transcoding among others).

- Feature testing. Developers can test an application inside a Docker container and then deploy that same container. This eliminates the extra step of separately configuring the application for test and deployment environments.

- Multi-cloud strategy. Containers can easily be moved between different cloud environments, so you can select the best cloud provider for each application and avoid vendor lock-in.

- DevOps. Container technology abstracts infrastructure details and enables easier integration with continuous integration and continuous delivery (CI/CD) pipelines. Developers can build, test, and deploy apps from the same container image, leading to faster release times. Container orchestration platforms also automate app deployment, scaling, monitoring, and management tasks, resulting in fewer failures on the operations side. Learn more about getting started with container technology in DevOps.
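The "build, test, and deploy from the same container image" flow from the DevOps use case can be sketched as a small script that drives the Docker CLI. This is a simplified illustration, not a recommended pipeline: the image name, the tag, and the assumption that the image contains `pytest` are all hypothetical, and real pipelines are usually defined in the CI system's own configuration format.

```python
import subprocess
from typing import List


def pipeline_commands(image: str, tag: str) -> List[List[str]]:
    """Return the build, test, and push steps for one immutable image reference."""
    ref = f"{image}:{tag}"
    return [
        ["docker", "build", "-t", ref, "."],  # Build the image once.
        ["docker", "run", "--rm", ref, "python", "-m", "pytest"],  # Test that exact image.
        ["docker", "push", ref],  # Publish the image that was just tested.
    ]


def run_pipeline(image: str, tag: str, dry_run: bool = True) -> List[str]:
    """Print each step and execute it only when dry_run is False.

    check=True stops the pipeline at the first failing step, so an image
    that fails its tests is never pushed.
    """
    executed = []
    for command in pipeline_commands(image, tag):
        line = " ".join(command)
        print(line)
        if not dry_run:
            subprocess.run(command, check=True)
        executed.append(line)
    return executed


if __name__ == "__main__":
    # Dry run by default: prints the commands without requiring Docker.
    run_pipeline("registry.example.com/orders", "1.4.2")
```

Because every step refers to the same `image:tag` reference, the artifact that passed the tests is the one that gets published and deployed.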

When Are Virtual Machines a Better Choice?

Although container technology has been around for quite some time, not every team uses it. A 2023 survey by DZone found that among adopters of microservices, only 57% of teams run more than half of their code in containers. The reasons for not using containers vary.

Some teams are concerned about the security implications. Last year, 67% of companies delayed or slowed down application deployment due to container security concerns. Kubernetes clusters have become prime targets for cyber-attackers. A recent investigation detected over 350 openly accessible and unprotected projects, of which more than half had been breached and had malware or backdoors deployed. As with cloud computing security, container deployments require proactive security measures such as container image scanning, admission controls, and runtime security.

Others are still early in their cloud adoption journey and rely on VMs to support monolithic workloads, a job VMs do well. Complex legacy systems often cannot simply be ‘lifted and shifted’ to containers. A more sustainable approach is the progressive modernization of sub-system components and their refactoring into cloud-native, microservices-based components. Likewise, some resource-heavy workloads may not always fit well into a containerized model.

In cases like these, VMs and containerized applications co-exist in the company’s IT estate. Developers may also choose, for other reasons, to build microservices-based applications in containers that interact with virtualized applications.

Use Cases of VMs

- Extra workload security. VMs provide stronger workload isolation than containers, which reduces the attack surface and contains the potential impact of a security incident to a single workload. Some teams therefore opt for VMs to run untrusted workloads or, conversely, to ensure higher security for apps containing sensitive data.

- Compliance. Virtualization of local servers allows teams to better isolate sensitive workloads from others to meet regulatory requirements on data storage or processing.

- Cross-platform software support. Not all software can run in a container, especially third-party tools acquired from vendors.

- Persistent sandbox environments. VMs can maintain a persistent state with specific software installations, configurations, and other parameters, and you can easily start them up and shut them down without losing data. This makes VMs a popular choice for hosting shared sandbox and testing environments that anyone on the team can use.

- IoT testing. Cloud VMs can emulate different hardware architectures and run different kernels, making them an excellent choice for testing different configurations of IoT devices. You can also create snapshots of VM instances and easily revert to previous configurations or create templates to reproduce testing environments for different devices.

- Network slicing. VMs will also continue to play a key role in enabling network slicing in telecom, allowing operators to dynamically create (and delete) isolated virtual networks, tailored to specific use cases.

Finally, you can also use VMs as a benchmarking baseline. For instance, you can check whether a containerized workload indeed consumes fewer resources or handles loads more efficiently than its VM-hosted counterpart, so you can make better migration decisions.
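A comparison like this only works if the same measurement runs unchanged in both environments. The sketch below shows one possible harness: run it once on the VM and once in the container, then compare the numbers. The function names and the stand-in workload are hypothetical, and `tracemalloc` only tracks memory allocated by Python itself, so host-level monitoring is still needed for a complete picture.

```python
import statistics
import time
import tracemalloc
from typing import Callable, Dict


def benchmark(workload: Callable[[], object], repeats: int = 5) -> Dict[str, float]:
    """Run the workload several times; report median wall time and peak Python memory."""
    durations = []
    peak_bytes = 0
    for _ in range(repeats):
        tracemalloc.start()
        started = time.perf_counter()
        workload()
        durations.append(time.perf_counter() - started)
        _, peak = tracemalloc.get_traced_memory()
        tracemalloc.stop()
        peak_bytes = max(peak_bytes, peak)
    return {
        "median_seconds": statistics.median(durations),
        "peak_python_bytes": float(peak_bytes),
    }


def sample_workload() -> int:
    """Stand-in CPU and memory load; replace with a call into the real service."""
    return sum(len(str(n)) for n in list(range(200_000)))


if __name__ == "__main__":
    print(benchmark(sample_workload))
```

Using the median rather than a single run reduces the effect of one-off noise, which matters when the two environments share hardware with other workloads.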

Conclusion

Containers may have become the preferred option for microservices architectures and cloud-native application development, yet VMs remain a popular choice for other use cases, ranging from cross-platform legacy application support to IoT testing and network slicing. Virtual desktop infrastructure (VDI) is another growing market as companies continue to adopt digital workplace solutions to better support remote and hybrid teams.
