How to Choose the Right Solution for Your Data Analytics

4 out of 5 marketing executives struggle to translate vast amounts of customer data into data-driven decisions. This article explores a strategic approach to choosing the right data analytics solution for your organization's unique needs. Here are four key steps to get started with your data:

Step 1: Define Your Data Strategy

Having a clear data strategy is important for any business that handles big data, which applies to most of today’s organizations. It’s what makes the difference between merely gathering data and turning it into a valuable asset that helps you make smart choices and grow your business.

Just picking the correct analytics tools isn't a data strategy. A data strategy is a comprehensive approach that also covers the tools needed for data gathering, storage, and analysis, as well as data governance policies and compliance requirements. A thorough familiarity with this ecosystem will help you select a data analytics solution that fits in well with your overall data goals. Studies suggest this approach pays off: 75% of respondents report that data-driven decisions can prompt significant revenue growth.

To determine the data strategy, you should:

- Align data analytics with overall business goals. Don't let data analytics become an isolated initiative. Instead, ensure it integrates with your overarching business development strategy. Clearly defined goals, such as increased customer retention, optimized supply chains, or personalized marketing efforts, help you identify the specific data points and analytics tools that will yield the most impactful results. Also, avoid "quick and cheap" solutions. Opting for a basic solution solely based on cost and speed of implementation can lead to scalability issues and maintenance challenges down the road.

- Embrace a long-term vision. Ad-hoc fixes might seem appealing, but they often fall short in the long run. Think beyond immediate needs and envision your organization's data-driven future. Do you plan to expand into new markets, develop innovative products, or build a real-time data ecosystem? Having this long-term vision allows you to choose a data platform with enough scalability and flexibility to adapt to your evolving needs. While addressing current requirements is important, neglecting long-term considerations can lead to costly rework and limitations in the future.

- Consider industry trends and disruptions. The data landscape is a dynamic field constantly reshaped by emerging technologies and shifting customer behaviors, so become a keen observer of trends within your industry. For example, retailers that enable omnichannel experiences prioritize data analytics that optimizes both online and offline customer journeys, while in banking, data analytics platforms are becoming central to managing the loan process. Read more in Data Analytics for Banking and Fintech.

- Develop a data governance framework. Data is only valuable if it's accurate, consistent, and secure. Establish a data governance framework to ensure data quality and maintain data integrity throughout its lifecycle. The framework may include:

  - data ownership

  - access controls

  - data security protocols.
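To make the governance elements above more concrete, here is a minimal sketch of how ownership, access controls, and a security flag might be recorded per dataset. The class, field names, roles, and the `crm_policy` example are all hypothetical and chosen for illustration; a real framework would typically rely on the access management features of your data platform.

```python
from dataclasses import dataclass, field


@dataclass
class DatasetPolicy:
    """Governance record for one dataset (all field names are illustrative)."""
    owner: str                                       # team accountable for data quality
    readers: set[str] = field(default_factory=set)   # roles allowed to read
    writers: set[str] = field(default_factory=set)   # roles allowed to modify
    encrypted_at_rest: bool = True                   # example security protocol flag


def can_access(policy: DatasetPolicy, role: str, action: str) -> bool:
    """Return True if the role may perform the action ('read' or 'write')."""
    if action == "read":
        # Assumption: writers may also read what they are allowed to modify.
        return role in policy.readers or role in policy.writers
    if action == "write":
        return role in policy.writers
    return False  # unknown actions are denied by default


# Hypothetical policy for a CRM dataset.
crm_policy = DatasetPolicy(
    owner="sales-ops",
    readers={"analyst", "marketing"},
    writers={"data-engineer"},
)

print(can_access(crm_policy, "analyst", "read"))    # True
print(can_access(crm_policy, "analyst", "write"))   # False
```

Denying unknown actions by default keeps the sketch on the safe side: access has to be granted explicitly rather than revoked after the fact.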

What if you do not have a data strategy in place?

Consider two farms:

- Farm A: Operating without a fully realized data strategy, Farm A relies on traditional methods to predict crop yields. This approach, even with its merits, is susceptible to unpredictable weather patterns and leads to inconsistent yields and potential losses. Even if the farm collects some data, it misses out on the insights hidden in that data that could significantly improve its operations.

- Farm B: With a comprehensive data-driven approach, Farm B leverages sensor data to monitor soil moisture and nutrient levels, allowing for precise fertilizer application. Additionally, they can analyze historical weather patterns to predict potential droughts and adjust irrigation schedules accordingly. This results in optimized resource utilization and increased crop yield.

Case in point. MHP, a major agricultural player in Ukraine, sought to digitize and automate its extensive sowing campaign spanning 6,000 fields across 11 regions. Infopulse delivered an Azure-based data platform that could be scaled further as the customer’s needs evolved.

Step 2: Adapt an Advanced Analytics Solution to Your Business Processes

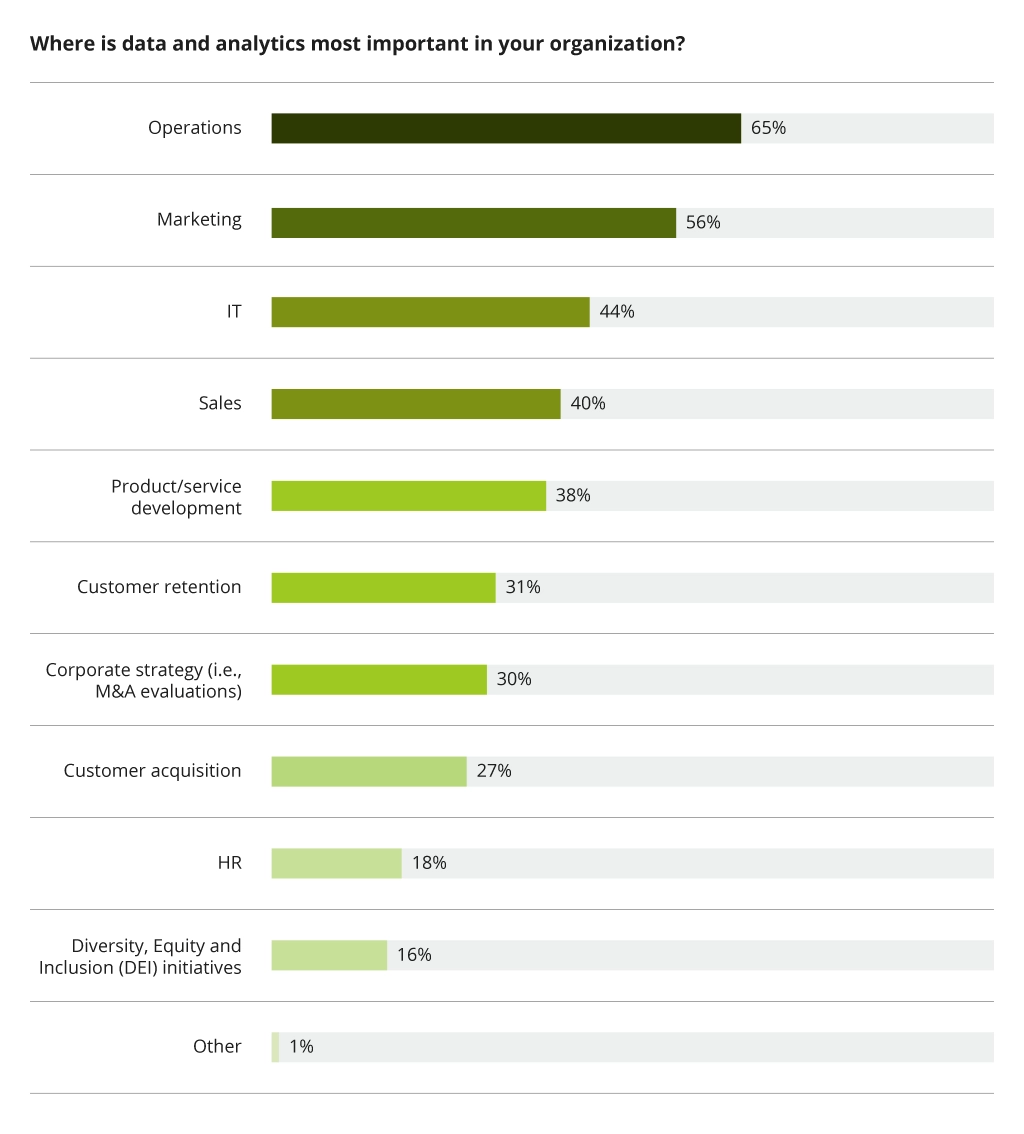

This step delves into the heart of the matter: how your IT infrastructure impacts and interacts with your business processes. Organizations should review which areas stand to benefit most from data analytics.

The core question at this stage is whether your organization desires to optimize its business processes through the implementation of IT solutions, namely data analytics. The answer, in most cases, is a resounding yes. However, matching and adapting existing processes to fit a new solution is a task of its own.

Here's how you can achieve such digital alignment:

- Business process mapping. The first step is to thoroughly map your existing business processes and identify key data points used at each stage. This comprehensive exercise reveals data dependencies and potential bottlenecks that could hinder data flow.

- IT infrastructure assessment. Conduct a detailed assessment of your current IT infrastructure. Analyze the capabilities of your existing systems (e.g., CRM, ERP, etc.) and their capacity to handle large data volumes needed for analytics. This evaluation identifies any gaps or limitations in your technological foundation.

- Data integration strategy. With mapped processes and assessed infrastructure at hand, develop a data integration strategy. It outlines how data will be collected, transformed, and transferred between various systems. Consider using data integration tools and ETL (Extract, Transform, Load) processes to ensure data consistency and quality for analysis.

- Solution implementation and customization. Now, you can put your data analytics solution into action. Customize it to your business specifics, industry, and the way you get your data. The solution should perfectly align with your current setup and processes. For example, tailor the solution's reports, dashboards, and data pipelines to meet your unique business requirements.

- User adoption and training. Finally, successful digital alignment hinges on user adoption and training. Equip your teams with the necessary skills to utilize the chosen data analytics platform and translate insights into actionable business decisions. This may involve training programs, workshops, and ongoing user support.
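The ETL idea mentioned in the data integration step can be sketched in a few lines. The example below is only an illustration under stated assumptions: the CSV content, column names, and the `orders` table are invented, and an in-memory SQLite database stands in for a real analytics store.

```python
import csv
import io
import sqlite3

# Hypothetical CRM export; in practice this would come from a file or an API.
RAW_CSV = """customer_id,email,order_total
1, Alice@Example.com ,120.50
2,bob@example.com,not_a_number
3,carol@example.com,75
"""


def extract(source: str) -> list[dict]:
    """Extract: read rows from CSV text into dictionaries."""
    return list(csv.DictReader(io.StringIO(source)))


def transform(rows: list[dict]) -> list[tuple]:
    """Transform: normalize emails, cast totals, and skip invalid rows."""
    clean = []
    for row in rows:
        try:
            total = float(row["order_total"])
        except ValueError:
            continue  # a real pipeline would log or quarantine this row
        clean.append((int(row["customer_id"]), row["email"].strip().lower(), total))
    return clean


def load(rows: list[tuple], conn: sqlite3.Connection) -> None:
    """Load: write the cleaned rows into the analytics table."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS orders "
        "(customer_id INTEGER, email TEXT, order_total REAL)"
    )
    conn.executemany("INSERT INTO orders VALUES (?, ?, ?)", rows)
    conn.commit()


conn = sqlite3.connect(":memory:")
load(transform(extract(RAW_CSV)), conn)
loaded = conn.execute("SELECT * FROM orders ORDER BY customer_id").fetchall()
print(loaded)
```

Keeping the three stages as separate functions makes it easier to test each one and to swap the source or destination later without touching the cleaning rules.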

Step 3: Consider Performance Requirements

Having defined the project's purpose and needs, clarify the performance requirements and data availability of the solution. This seemingly technical detail impacts the chosen architecture and ultimately influences your project success.

- Defining performance benchmarks. Clearly define your performance expectations. Do you require lightning-fast processing for real-time data analysis or might daily updates be sufficient? Real-time data necessitates a robust architecture with high bandwidth to handle the constant influx of information. Example: Imagine a financial institution that needs to establish real-time monitoring and analysis for fraudulent transactions to prevent financial losses. In such scenarios, maintaining low latency (minimal delay) becomes a priority.

- Assessing data availability. Data availability considerations go hand-in-hand with performance requirements. Determine what data needs to be stored and updated, for how long, and how often. This decision hinges on your industry and particular tasks. Most organizations implement real-time data capture for distinct scenarios – identifying and reacting to unexpected events. Example: A manufacturing plant uses network monitoring equipment with sensors. Real-time data allows for the immediate detection of equipment malfunctions, minimizing downtime and production losses. However, not all captured real-time data requires permanent storage. A "filtering" mechanism can be employed to identify and retain only relevant information for further analysis while discarding extraneous data to reduce storage costs and optimize system performance.

- Streamline real-time data. Real-time data systems often leverage specialized "stream processing" tools. These tools go beyond simple filtering: they analyze the continuous flow of data in real time and detect specific patterns or events as they occur in the data stream. Example: A stream processing tool can be used as part of a security system to monitor network traffic for potential cyberattacks. It would continuously analyze incoming data packets, searching for suspicious activity patterns within the data stream itself. This approach allows for immediate detection and reaction to threats, minimizing vulnerabilities. Additionally, by processing only relevant data points within the stream, it saves storage and reduces processing overhead.
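The difference between filtering and stream processing described above can be illustrated with a small sketch. It is not tied to any particular streaming product: the thresholds, event fields, and sample transactions are hypothetical, and a plain Python list stands in for a live data stream.

```python
from collections import deque
from typing import Iterable, Iterator

# Hypothetical thresholds for illustration only.
MIN_AMOUNT = 1_000.0      # filter: ignore transactions below this amount
WINDOW_SECONDS = 60       # sliding window length
MAX_EVENTS_IN_WINDOW = 3  # alert when an account exceeds this count


def filter_relevant(events: Iterable[dict]) -> Iterator[dict]:
    """Filtering: pass through only events worth analyzing or storing."""
    for event in events:
        if event["amount"] >= MIN_AMOUNT:
            yield event


def detect_bursts(events: Iterable[dict]) -> Iterator[dict]:
    """Stream processing: flag accounts with too many events in the window."""
    recent: dict[str, deque] = {}
    for event in events:
        window = recent.setdefault(event["account"], deque())
        window.append(event["ts"])
        # Drop timestamps that have fallen out of the sliding window.
        while window and event["ts"] - window[0] > WINDOW_SECONDS:
            window.popleft()
        if len(window) > MAX_EVENTS_IN_WINDOW:
            yield {"account": event["account"], "ts": event["ts"], "count": len(window)}


stream = [
    {"account": "A", "ts": 0, "amount": 1500.0},
    {"account": "A", "ts": 10, "amount": 20.0},     # filtered out
    {"account": "A", "ts": 20, "amount": 2500.0},
    {"account": "B", "ts": 25, "amount": 5000.0},
    {"account": "A", "ts": 30, "amount": 1200.0},
    {"account": "A", "ts": 40, "amount": 3000.0},   # 4th large event within 60 s
    {"account": "A", "ts": 200, "amount": 1100.0},  # window has moved on
]

alerts = list(detect_bursts(filter_relevant(stream)))
print(alerts)  # [{'account': 'A', 'ts': 40, 'count': 4}]
```

Both functions are generators, so events are handled one at a time as they arrive and only the short per-account window is kept in memory.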

Step 4: Evaluating Data Analytics Solutions

For some companies, selecting a million-dollar platform means overpaying for excessive features they won't use. This is why understanding your data analytics requirements is paramount. Focus on your data volume and growth projections to choose an appropriately scalable solution.
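A rough growth projection can be done with simple compound-growth arithmetic. The sketch below assumes a constant monthly growth rate, which is a simplification, and the starting volume, rate, and capacity figures are made up for illustration.

```python
def project_volume(current_tb: float, monthly_growth: float, months: int) -> float:
    """Project data volume assuming constant compound monthly growth."""
    return current_tb * (1 + monthly_growth) ** months


def months_until_full(current_tb: float, monthly_growth: float, capacity_tb: float) -> int:
    """Count whole months until the projected volume first exceeds capacity."""
    if monthly_growth <= 0:
        raise ValueError("monthly_growth must be positive for this estimate")
    months = 0
    volume = current_tb
    while volume <= capacity_tb:
        volume *= 1 + monthly_growth
        months += 1
    return months


# Hypothetical inputs: 10 TB today, growing 5% per month.
projected = project_volume(current_tb=10, monthly_growth=0.05, months=24)
print(f"Projected volume in 24 months: {projected:.1f} TB")
print(f"Months until 40 TB is exceeded: {months_until_full(10, 0.05, 40)}")
```

Even a back-of-the-envelope estimate like this helps you judge whether a platform's entry tier will last a few months or a few years.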

Here's a breakdown of key features to consider along with an overview of popular vendors.

1. Features and Functionality

Data Visualization

Tools like Tableau, with its drag-and-drop interface and ability to handle massive datasets, and Microsoft Power BI allow users to translate complex data patterns into clear and insightful visualizations.

Machine Learning (ML)

To switch to advanced analytics powered by AI, start using Azure Machine Learning, Amazon SageMaker, or Google Cloud AI Platform, depending on your current tech stack.

- Azure Machine Learning brings a guided experience for building and deploying ML models. It includes pre-built components and templates for common tasks, along with data cleaning and transformation tools.

- Amazon SageMaker leverages ML algorithms to help uncover hidden insights and make data-driven predictions.

- Google Cloud AI Platform offers pre-built AI models for tasks like image recognition and natural language processing.

Case in point. Infopulse opted for Google Cloud Platform (GCP) to revamp a German media giant's data infrastructure. Leveraging GCP, Infopulse developed a flexible and scalable data platform meeting the client's performance and security needs. With machine learning algorithms and recommendation engines, viewers enjoy personalized content, which results in boosted engagement.

Statistical Analysis

If you are looking for quantitative analysis tools, pay attention to traditional players like SAS and IBM SPSS. SAS, a leader in advanced analytics, provides a comprehensive suite of statistical tools that can handle complex data manipulations and modeling techniques. IBM SPSS, on the other hand, is known for its user-friendly interface and strong data cleaning capabilities, making it a popular choice for those seeking a balance between power and ease of use. These solutions cater to a wide range of statistical needs, from hypothesis testing and regression analysis to advanced modeling techniques.

2. Scalability and Flexibility

Cloud-Based Solutions

Cloud-based data analytics systems make sense for businesses looking for flexibility and scalability. Cloud giants like Microsoft Azure and Amazon Web Services (AWS) offer a rich ecosystem of data analytics services. Both provide seamless integration with data visualization solutions (Power BI, Amazon QuickSight), handle explosive data growth, and can scale up or down on demand.


3. Security

Data security is always a priority, so look for platforms with robust security features like encryption at rest and in transit, multi-factor authentication, and granular access controls to safeguard sensitive information. Here are some additional security considerations:

- Data residency. Understand where your data will be stored and processed by the vendor. For organizations with strict data privacy regulations, choosing a vendor that offers data residency in specific regions might be crucial.

- Compliance certifications. Look for vendors who comply with relevant security standards and regulations such as HIPAA, PCI DSS, and GDPR. Such compliance demonstrates the vendor's commitment to data security best practices.

- Threat detection and monitoring. Choose a platform that offers continuous threat detection and monitoring capabilities to identify and mitigate potential security breaches.

4. Cost

Data analytics solutions vary significantly in cost, influenced by features, scalability capabilities, deployment options (cloud-based versus on-premises), and the level of support required. Below is a breakdown of key cost factors to consider:

Licensing Costs

These can be perpetual (one-time fee) or subscription-based (annual or monthly fees). Perpetual licenses may seem cheaper in the long run, but they typically don't include ongoing upgrades and support. Subscription models offer greater flexibility and scalability, but the costs can add up over time.
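To compare the two licensing models, it can help to calculate cumulative costs year by year. The sketch below assumes the perpetual license needs a separately paid annual maintenance plan, and all prices are invented for illustration, so substitute your vendor's actual quotes.

```python
def cumulative_costs(years: int, perpetual_fee: float,
                     annual_maintenance: float, annual_subscription: float):
    """Return (year, perpetual_total, subscription_total) for each year."""
    rows = []
    for year in range(1, years + 1):
        perpetual_total = perpetual_fee + annual_maintenance * year
        subscription_total = annual_subscription * year
        rows.append((year, perpetual_total, subscription_total))
    return rows


def break_even_year(rows):
    """First year in which the subscription has cost at least as much."""
    for year, perpetual_total, subscription_total in rows:
        if subscription_total >= perpetual_total:
            return year
    return None  # subscription stays cheaper over the whole horizon


# Hypothetical prices in USD.
rows = cumulative_costs(
    years=6,
    perpetual_fee=50_000,
    annual_maintenance=5_000,
    annual_subscription=18_000,
)
for year, perpetual_total, subscription_total in rows:
    print(f"Year {year}: perpetual ${perpetual_total:,} vs subscription ${subscription_total:,}")
print("Break-even year:", break_even_year(rows))  # 4
```

With these made-up numbers the subscription overtakes the perpetual license in year four; a shorter planning horizon would favor the subscription.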

Implementation Costs

Most cloud vendors offer implementation services to help you set up and configure the data analytics solution. These services can be a significant cost factor, especially for complex deployments. Consider your in-house technical expertise or team up with an IT services vendor who can provide the needed level of customization at a reasonable cost.

Ongoing Support

Ongoing support plans provide access to technical assistance, bug fixes, and software updates. The cost of support varies depending on the vendor and the level of service required. Carefully assess your needs and the cost of ongoing support against the potential benefits of self-sufficiency.

Data Storage Costs

Cloud-based solutions typically charge for data storage based on the data volume. These costs can add up quickly, especially for organizations with large datasets. Carefully estimate your data storage needs and choose a pricing model that aligns with your usage patterns. Some vendors offer tiered storage options with lower costs for infrequently accessed data.
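The effect of tiered storage is easy to estimate with a few lines of arithmetic. The tier names and per-gigabyte prices below are hypothetical placeholders rather than any vendor's actual pricing, and the sketch ignores retrieval and request fees.

```python
# Hypothetical prices in USD per GB per month; real vendor pricing differs.
TIER_PRICES = {"hot": 0.02, "cool": 0.01, "archive": 0.002}


def monthly_storage_cost(volumes_gb: dict[str, float]) -> float:
    """Sum the monthly cost of the data volume held in each storage tier."""
    return sum(TIER_PRICES[tier] * gb for tier, gb in volumes_gb.items())


# Hypothetical split: a small hot set, with older data moved to cheaper tiers.
tiered = {"hot": 2_000, "cool": 10_000, "archive": 50_000}
all_hot = {"hot": sum(tiered.values())}

tiered_cost = monthly_storage_cost(tiered)
all_hot_cost = monthly_storage_cost(all_hot)

print(f"Tiered:  ${tiered_cost:,.2f} per month")
print(f"All hot: ${all_hot_cost:,.2f} per month")
```

Under these assumptions, moving infrequently accessed data to cheaper tiers cuts the monthly bill to roughly a fifth of keeping everything in the hot tier.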

Given the complexities involved, many organizations turn to consultants to select and implement the right data analytics solution. Consultants offer a broader perspective, considering industry trends and your organization's plans. By assessing your current state and future goals, they can recommend solutions that are not just technically sound, but also strategically aligned with your long-term vision.

Open-Source Options

For budget-conscious companies, open-source solutions like Apache Spark and Hadoop provide significant flexibility and customization options. However, implementing these solutions requires a higher level of technical expertise within your team.

Conclusion

Success can be yours with the right solution: all it takes is careful consideration of your organization's unique requirements and long-term ambitions. Cost optimization is an ongoing process, but with the methods provided, you should be able to identify the best solution within your budgetary constraints.

If you are looking for further consultancy or more insightful examples, Infopulse BI experts can advise you on a wider choice of data analytics solutions and how we have implemented them for our clients around the globe.
