Developing OSS on Hybrid Cloud Infrastructure: Technical Best Practices

However, telecoms are not rushing to migrate OSS and BSS systems to the cloud. According to Omdia, the most common reasons for hesitating are as follows:

- 61% have limited IT expertise to deliver such projects

- 51% have limited financial budgets allocated towards such initiatives

- 41% lack a clear-cut organization strategy for pursuing the transformation

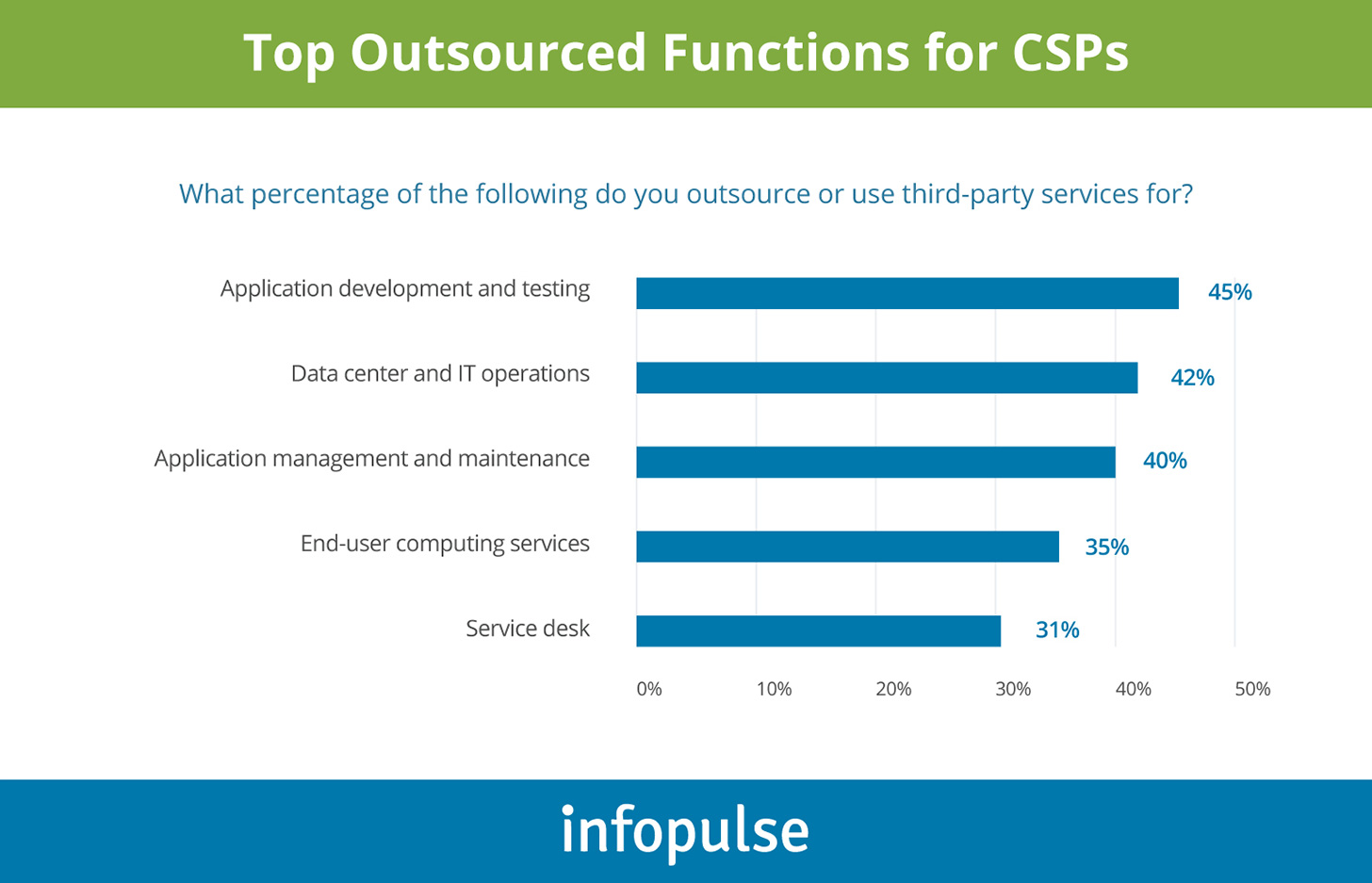

The above are valid barriers. However, as the survey further suggests, many telecoms have found an effective way to overcome them — using an IT outsourcing partner to facilitate the execution.

To show the efficiency and the outcomes of such partnerships, the Infopulse telecom team decided to share how we approached hybrid cloud OSS development on Microsoft Azure for one of our clients.

Hybrid Cloud Operational Support System (OSS) Setup for a Telecom Client

Our client, a subsidiary of a multinational telecommunication service company, approached us with a new project — development of an Azure-based OSS system for roaming deals management, plus integration and migration of several legacy on-premises components.

The team was seeking an automated, scalable solution for ensuring effective deal management with over 1,000 operators globally. Prior to this, the company did not have an end-to-end OSS tool to execute all the associated workflows. A good number of actions required manual inputs. Thus, they were also looking for a business-user-friendly solution that could remove manual inputs, provide more accessible self-service BI analytics, as well as conduct anti-fraud analysis.

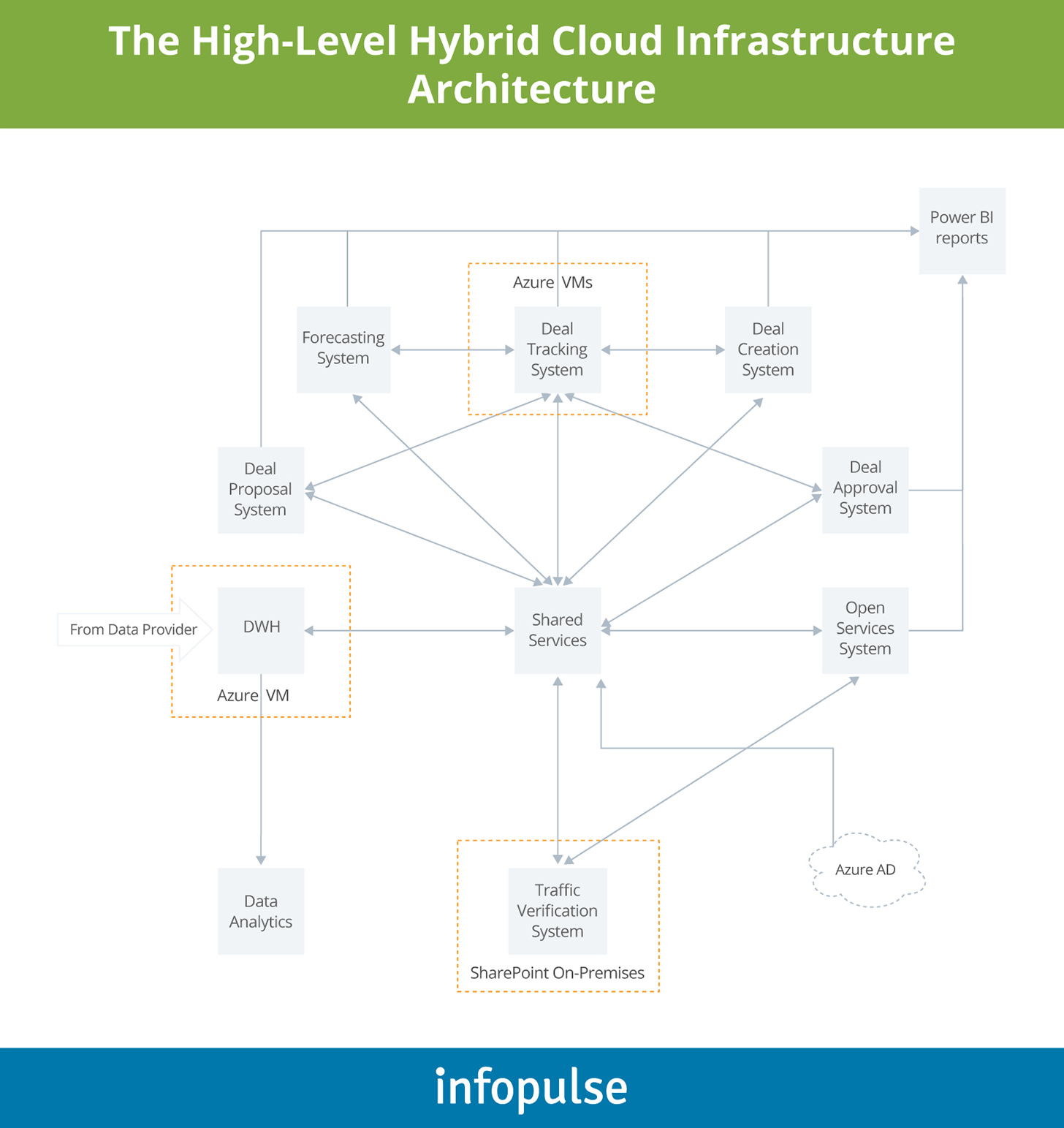

Our team of telecom software architects suggested the following hybrid cloud infrastructure to power the new OSS system.

The focal point of the system is a “Shared Services” unit, featuring a collection of services for:

- General data access permissions

- Role and permissions management

- Reference data management

This unit effectively served as a “router” for further transmitting data, authorizations, and permissions to access different subsystems, designed as separate microservices and a connected application, hosted on-premises.

Below we further discuss why we opted to use certain Azure services and products for powering the product and ensuring its high performance and scalability.

Select Your Prime Contender for Migration and Spin Your Requirements from It

When we started working on the project, the client already had a legacy Deal Tracking system, operated locally. This OSS tool, however, only covered a fraction of required workflows and some actions had to be completed manually by users.

Moreover, the application lacked modern OSS functionality such as deal volume forecasting systems, proposal management systems, and anti-fraud management functionality, among others. Our team analysed the provided requirements and suggested de-coupling certain functionality from the legacy application and building Azure-native sub-applications for automating the entire lifecycle of roaming deal management.

The legacy deal management application was migrated from on-premises to an Azure VM. Doing so also allowed the teams to access the tool directly from their workstations, rather than logging in to a remote server via an RDP client.

Migrating On-Premises Data Storage to Cloud Improves Both Latency and Security

Most of the corporate data was previously hosted at an on-premises location (a data center). From a compliance perspective, that was an optimal solution. However, as we developed native business applications on Azure, accessing data from an on-premises data center resulted in high latency.

To reduce latency, we set up a secure data warehouse using Microsoft Azure SQL Database and migrated all data to Azure VMs. This, in turn, allowed us to improve availability without sacrificing any security controls.

As you know, infrastructure security in telecom is a crucial requirement, especially when it comes to data exchanges and privacy configurations. To ensure compliance, we incorporated Azure AD (Active Directory). We then used this service to configure how different apps could exchange data. For example, the Forecasts app could only exchange data with Deal Tracker (and vice versa). Such intentional limitation prevents unnecessary data exposure and reduces the chance of intentional or accidental data breaches.
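The pairing rule described above can be illustrated with a small deny-by-default allow-list. This is only a sketch of the idea: in the project itself the restriction was configured in Azure AD, not in application code, and the identifiers `forecasts`, `deal-tracker`, and `deal-approval` as well as the `may_exchange` helper are hypothetical names chosen for illustration.

```python
from typing import FrozenSet, Set

# Hypothetical app identifiers. Only the Forecasts <-> Deal Tracker pairing
# comes from the article; the names themselves are assumptions.
ALLOWED_EXCHANGES: Set[FrozenSet[str]] = {
    frozenset({"forecasts", "deal-tracker"}),
}


def may_exchange(source: str, target: str) -> bool:
    """Return True only if the pair is explicitly allowed (deny by default)."""
    # A frozenset ignores ordering, so the rule applies in both directions.
    return frozenset({source, target}) in ALLOWED_EXCHANGES


print(may_exchange("forecasts", "deal-tracker"))   # True
print(may_exchange("deal-tracker", "forecasts"))   # True
print(may_exchange("forecasts", "deal-approval"))  # False
```

Storing each pair as an unordered set keeps the "and vice versa" part of the rule implicit, so a permission cannot accidentally be granted in one direction only.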

Flexible Analytics is the Main Element of the Best Operation Support Systems (OSS)

All the incoming roaming data, housed in the cloud DWH, could be effectively assessed by an array of analytics tools.

First, we added a set of analytics tools that let line-of-business users analyze all the incoming traffic using Azure Analytics. This allowed them to verify specific data manually or automatically, check for inconsistencies, and apply different filters to examine data from different angles and generate custom reports.

Separately, we allowed easy data export from every system to Power BI, a robust self-service analytics tool that telecom managers could use to review deal volumes and statuses, as well as set up custom reporting dashboards based on their needs.

Select Databases That Best Fit Your Needs

While we chose Azure SQL for the main DWH, we also used Microsoft Azure Cosmos DB (with the SQL API and the MongoDB API) for the Deal Approval and Open Services systems. In this case, we opted for Cosmos DB because the data structure within these apps required a non-relational database.

Cosmos DB is a relatively new solution. But it does come with some strong advantages, namely:

- SLA-backed guarantees for availability (99.99% for single-region accounts, higher for multi-region configurations), throughput, consistency, and latency.

- Flexible pricing model that bills storage and throughput independently.

- Multi-API connectivity, which allows you to easily build integrations with other apps and databases (as we did).

- Tunable consistency levels (five in total) allowing you to implement truly granular controls.

So, if you are also looking for a scalable, high-performing NoSQL database, Cosmos DB is a strong contender.
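To make the "five consistency levels" point more concrete, the sketch below lists them from strongest to weakest and adds a simple rule of thumb for choosing one. The level names follow the Cosmos DB documentation; the `pick_level` helper and its two yes/no questions are a hypothetical simplification, not the selection logic used in the project.

```python
from enum import IntEnum


class ConsistencyLevel(IntEnum):
    """The five Cosmos DB consistency levels, strongest (lowest value) first."""
    STRONG = 0
    BOUNDED_STALENESS = 1
    SESSION = 2
    CONSISTENT_PREFIX = 3
    EVENTUAL = 4


def pick_level(needs_latest_everywhere: bool,
               needs_read_your_writes: bool) -> ConsistencyLevel:
    """Hypothetical rule of thumb: pick the weakest level that meets the need."""
    if needs_latest_everywhere:
        # Every reader must see the most recent committed write.
        return ConsistencyLevel.STRONG
    if needs_read_your_writes:
        # A client must see its own writes within its session.
        return ConsistencyLevel.SESSION
    # No ordering or freshness requirement: favor latency and throughput.
    return ConsistencyLevel.EVENTUAL


print(pick_level(False, True).name)  # SESSION
```

Weaker levels generally trade freshness guarantees for lower latency and cost, which is why the helper defaults to the weakest option that still satisfies the stated requirement.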

Cloud Migration Does Not Have to Be All or Nothing

As you may have noticed from the diagram, one of the subsystems, a Traffic Verification System built in Microsoft SharePoint, was left on-premises. Migrating this application to Azure made sense technologically. However, this app had the most legacy code. The cost of refactoring it for the newly set up cloud environment and purchasing a cloud-based SharePoint license was not justified by the perceived value of completing the migration. Therefore, the client decided to postpone the migration for now.

Cloud migration carries a host of quantifiable benefits and indirect gains. However, not every legacy product makes a good candidate for modernization. Thus, as you prepare your business case for cloud migration, it always makes sense to start with the “low-hanging fruit”: applications that could demonstrate short-term cloud ROI and help justify the investment in more complex projects.

CI/CD is Essential for Running Effective Software Delivery Cadence in Hybrid Clouds

Given the high degree of connectivity and dependencies between different subsystems, having a strong CI/CD process, backed by DevOps best practices, was essential. Since we were working with both legacy and new products, our goal was to prevent “old” apps from tampering with newly built cloud services.

To prevent any chance of operational disruptions and ensure business continuity, our team created a separate code branch for each new feature. For each branch, in turn, a suitable environment was auto-generated. As a result, all quality assurance (QA) activities were isolated and did not impact the systems in the production environment. To contain costs, each environment was automatically taken down post-testing.
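The branch-per-feature lifecycle described above could be modeled roughly as follows. This is a minimal in-memory sketch: the `oss-qa` prefix, the `environment_name` helper, and the `EnvironmentRegistry` class are hypothetical, and a real setup would call the cloud provider's provisioning tooling where this code only records names.

```python
import re
from dataclasses import dataclass, field
from typing import Dict


def environment_name(branch: str, prefix: str = "oss-qa") -> str:
    """Derive a DNS-safe environment name from a feature branch name."""
    # Collapse every run of non-alphanumeric characters into a single hyphen.
    slug = re.sub(r"[^a-z0-9]+", "-", branch.lower()).strip("-")
    # Keep the name within 63 characters, a common DNS label limit.
    return f"{prefix}-{slug}"[:63].rstrip("-")


@dataclass
class EnvironmentRegistry:
    """Tracks which isolated QA environment belongs to which branch."""
    active: Dict[str, str] = field(default_factory=dict)  # branch -> env name

    def provision(self, branch: str) -> str:
        name = environment_name(branch)
        self.active[branch] = name
        return name

    def tear_down(self, branch: str) -> bool:
        """Remove the environment after testing; False if none existed."""
        return self.active.pop(branch, None) is not None


registry = EnvironmentRegistry()
print(registry.provision("feature/Deal-Approval_v2"))  # oss-qa-feature-deal-approval-v2
print(registry.tear_down("feature/Deal-Approval_v2"))  # True
print(registry.active)                                 # {}
```

Deriving the environment name deterministically from the branch name means the teardown step can find the right environment without any extra bookkeeping.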

To manage all the configurations, we used Azure DevOps. The entire SDLC was set up around specific triggers. Once new code landed in the correct branch, a new build was auto-triggered. As part of CI, we also ran unit tests for new builds to ensure that new features had not interfered with the legacy ones.

After receiving the approval, the new code moved into the testing environment. After passing all quality checks, the code once again was reviewed and approved. Then the code was passed on to the “release candidate” environment, and went through user acceptance testing (UAT). Finally, the new feature moved to the production environment, where it was once again reviewed and prepared for the release.
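The promotion flow above can be read as a sequence of gated stages, where code advances one step only after checks pass and a reviewer approves. The sketch below is an assumption-laden simplification: the stage names mirror the text, but the `promote` function and its two boolean inputs are hypothetical and stand in for what Azure DevOps pipelines and approvals handled in the project.

```python
from enum import Enum
from typing import List


class Stage(Enum):
    BUILD = "build"
    TESTING = "testing"
    RELEASE_CANDIDATE = "release candidate"
    PRODUCTION = "production"


# Promotion order as described in the text.
PIPELINE: List[Stage] = [
    Stage.BUILD,
    Stage.TESTING,
    Stage.RELEASE_CANDIDATE,
    Stage.PRODUCTION,
]


def promote(current: Stage, checks_passed: bool, approved: bool) -> Stage:
    """Move one stage forward only if checks passed AND a reviewer approved."""
    if current is Stage.PRODUCTION:
        return current  # nothing left to promote to
    if not (checks_passed and approved):
        return current  # gate closed: stay in the current stage
    return PIPELINE[PIPELINE.index(current) + 1]


stage = Stage.BUILD
for checks, approval in [(True, True), (True, False), (True, True), (True, True)]:
    stage = promote(stage, checks, approval)
print(stage.value)  # production
```

In this run the second step is held back by a missing approval, so the code reaches production only after four attempts rather than three.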

While the description may seem lengthy, in reality, most of these steps were highly automated, using Azure DevOps pipelines. Plus, all the releases happened automatically with no disruptions or downtime.

To Conclude

From the business perspective, the telecom sector is forced to evolve fast under several stressors at once — the demand for better service levels, 5G transition, rising traffic volumes, and evolving security threats.

However, it is impossible to resolve those issues without adopting modern OSS architecture and addressing inherent issues within legacy IT infrastructure. The challenge for the telecom industry today is not only identifying underperforming applications in the portfolio, but also understanding how the current IT environment supports (rather than hinders) the emerging business priorities.

Contact the Infopulse team for a further consultation on how you should approach the development of secure, high-performing, and cost-effective OSS solutions for your company.

About the Authors

Roman Mazanka is a Senior DevOps Engineer with 6+ years of experience in information technology. He has a history of successfully delivered projects across domains with a primary focus on the telecom, banking, and agriculture industries. At Infopulse, Roman is involved in cloud OSS development for one of our customers.

Connect with Roman on LinkedIn.

Anatolii Omelchenko is an experienced software engineer with a demonstrated history of working in the information technology and services industry. Over more than six years, he has contributed to numerous successful projects for banking and telecom. Anatolii’s tech stack includes C#, TypeScript, MongoDB, SQL, and Microsoft Azure.

Connect with Anatolii on LinkedIn.
