Microsoft Copilot for Security: How Generative AI Accelerates Security Operations

Yet the continued explosion of data and a growing IT infrastructure footprint, coupled with ongoing security talent shortages, undermine companies’ security efforts.

Generative AI applications like Microsoft Copilot have emerged as possible solutions, promising significant workflow improvements through intelligent automation.

Microsoft unveiled Microsoft Security Copilot in March 2023 and opened an early access program in October 2023. It is a generative AI assistant for cybersecurity teams, designed to facilitate incident investigation, risk exposure analysis, and threat mitigation scenarios.

Microsoft Copilot Overview

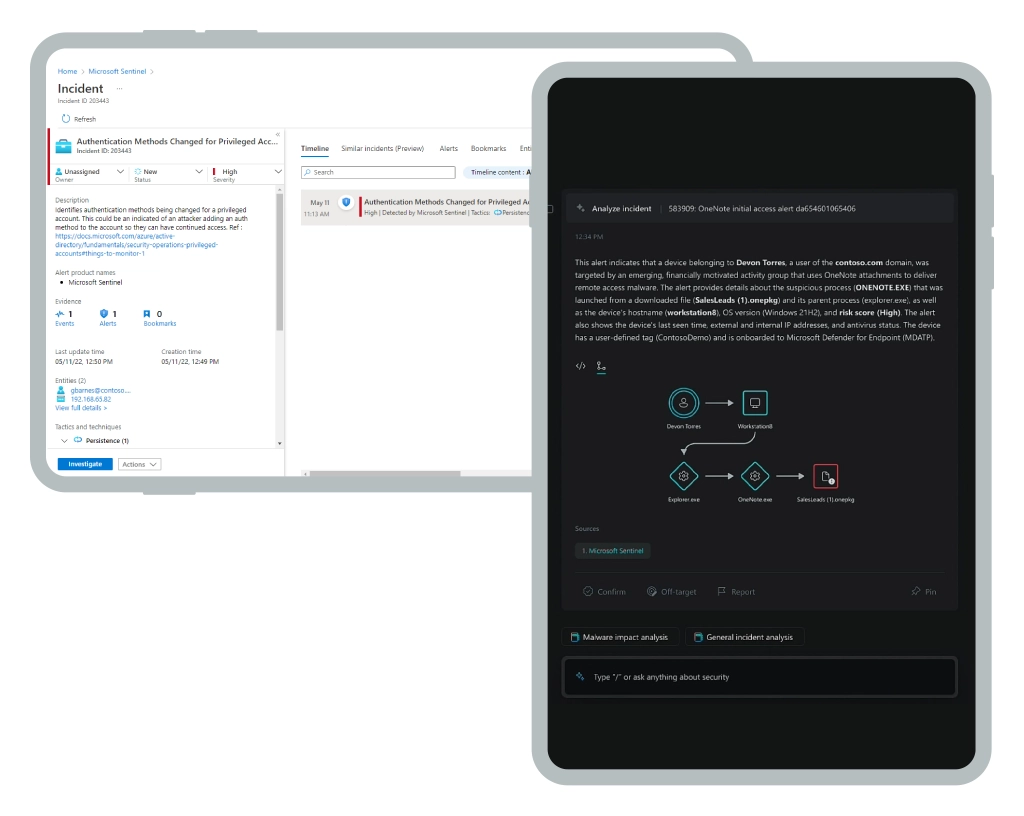

Traditionally, SOC teams rely on an array of threat analytics dashboards (e.g., Microsoft Defender) and SIEM/SOAR tools (e.g., Microsoft Sentinel) to access the latest security intel and investigate abnormal activity.

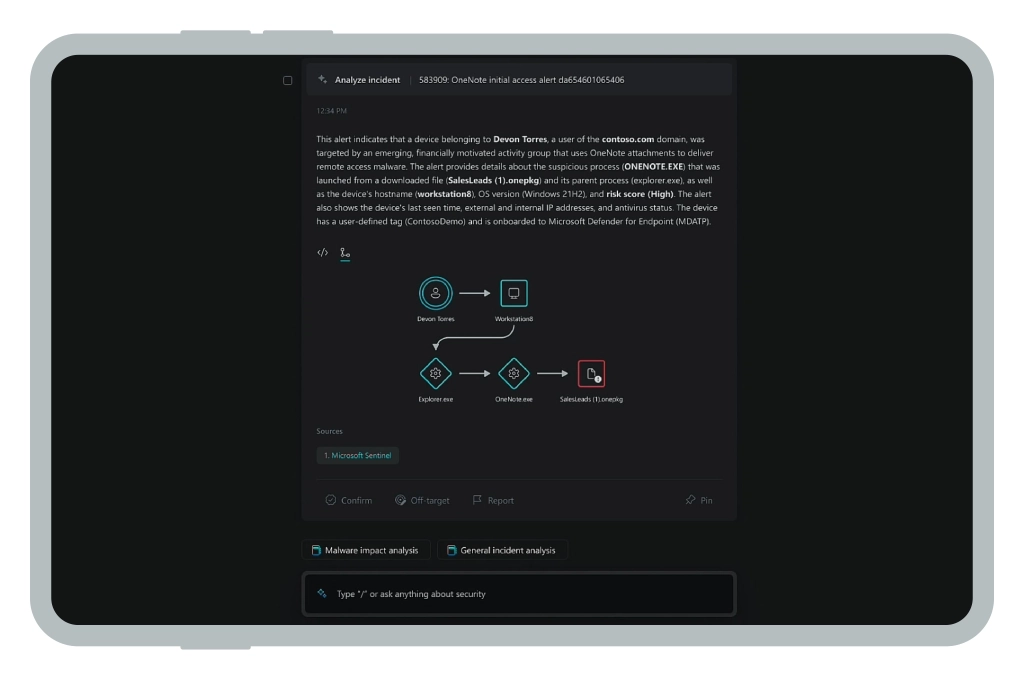

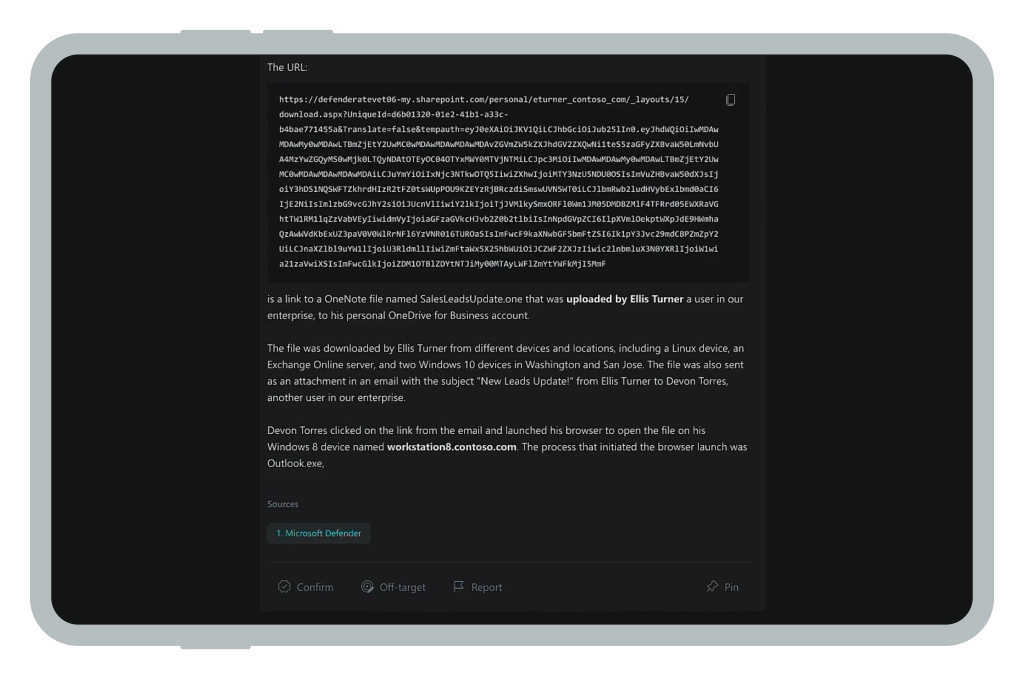

Security Copilot adds a conversational interface to these technologies, allowing analysts to ask open-ended questions about different threat types, reverse-engineer malicious code, or locate possible vulnerabilities in corporate infrastructure. Instead of writing custom scripts or performing manual analysis, cyber specialists can interact with the underlying tech stack via natural language. Think of it as asking ChatGPT to summarize a 15-page business report, only with your security data.

Traditional Security Dashboard vs Microsoft Copilot for Security

Security Copilot is just one of the many “companions” that Microsoft released last year. Others include embedded Copilots for GitHub, Microsoft 365, Power BI, and Viva, as well as Sales Copilot for operationalizing data from customer relationship management (CRM) applications in Dynamics 365 and Power Apps. Copilot in Microsoft Fabric is available in preview, and Windows Copilot is coming out mid-2024.

How Does Microsoft Security Copilot Work

Microsoft Copilot is akin to an operating system. According to an ex-Microsoft employee, the system has four conceptual layers, supporting all of the Microsoft Copilot features:

- Hardware: High-performance cloud computing infrastructure, powered by the latest GPUs from NVIDIA.

- Foundation models: Microsoft uses a combination of OpenAI models (GPT-4, DALL-E, Whisper) and possibly some open-source language models such as BERT, Dolly, and LLaMA. All of these models are stored in a model registry in Azure AI Studio. For security data analysis, Microsoft also likely uses custom neural networks.

- Orchestration: This layer handles prompt engineering and performs quality control. Like ChatGPT, Microsoft uses the Retrieval Augmented Generation (RAG) approach: a plugin model retrieves data from connected sources and adds it to the prompt as extra context. This allows Copilots to provide personalized responses based on enterprise data (in the case of security, the latest analytics from Microsoft Sentinel).

- User interface: A chat-like interface for interacting with the underlying model(s).
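The retrieval step in the orchestration layer can be illustrated with a minimal sketch. This is not Microsoft's implementation: the `Document` type, the keyword-overlap retriever, and the source names are all assumptions made for illustration, and a production system would more likely use vector search than word matching.

```python
from dataclasses import dataclass


@dataclass
class Document:
    source: str  # hypothetical connected source, e.g. "sentinel"
    text: str


def retrieve(query: str, documents: list[Document], top_k: int = 2) -> list[Document]:
    """Toy retriever: rank documents by how many words they share with the query."""
    query_words = set(query.lower().split())

    def overlap(doc: Document) -> int:
        return len(query_words & set(doc.text.lower().split()))

    ranked = sorted(documents, key=overlap, reverse=True)
    # Keep at most top_k documents and drop those with no shared words.
    return [doc for doc in ranked[:top_k] if overlap(doc) > 0]


def build_prompt(query: str, documents: list[Document]) -> str:
    """Augment the question with retrieved context before it is sent to a model."""
    context = "\n".join(f"[{d.source}] {d.text}" for d in retrieve(query, documents))
    return f"Context:\n{context}\n\nQuestion: {query}"
```

The model itself never changes here; only the prompt does. That is what lets the same foundation model answer with organization-specific data.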

In the case of Microsoft Security Copilot, the model collects security telemetry data from connected security tools such as:

- Microsoft Sentinel

- Microsoft Defender XDR

- Microsoft Intune

- Microsoft Defender Threat Intelligence

- Microsoft Purview

- Microsoft Defender for Cloud

- Microsoft Defender External Attack Surface Management

- Microsoft Entra.

Based on the information obtained, it generates insights and guidance specific to your organization. Think of it as ChatGPT trained on your music playlist and always recommending songs you like.

Benefits of Microsoft Security Copilot

Similar to other generative AI apps, Microsoft Security Copilot reduces the time spent on information look-up. Early users report up to 40% time reduction on core security operation tasks. Other advantages of Microsoft Security Copilot include:

Workforce Augmentation

Cybersecurity skill shortages remain rampant, with 59% of teams understaffed. The remaining analysts, in turn, have to shoulder heavier workloads and operate on the verge of burnout. According to a recent survey, 90% of UK cybersecurity specialists continue to check corporate communications even while on vacation, and 32% have their lives interrupted by work every night.

Burnout is dangerous because it leads to mistakes. In fact, 83% of IT security professionals admit that someone on their team has made burnout-related errors that led to security breaches.

Microsoft Security Copilot brings a greater degree of automation to standard security processes, which, in turn, reduces error rates. With more menial tasks streamlined, your employees can take proper time off and focus on higher-value tasks during work hours, for example, shifting from firefighting to developing new elements of your cybersecurity program.

Reduced Alert Fatigue

With an extended security perimeter, cybersecurity professionals are exposed to a wider range of alerts. Nearly half (44%) spend over 20 hours per week managing alerts, and 9% deal with over a million security alerts each day.

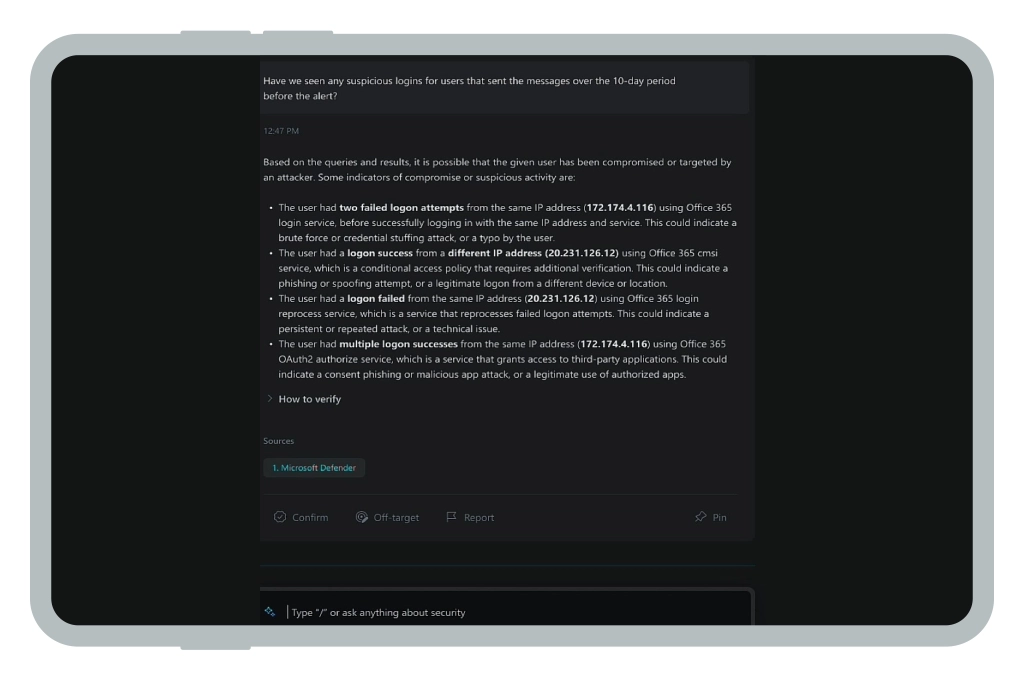

Microsoft Security Copilot enriches security alerts with contextual recommendations and remediation scenarios, which speeds up the review and triage process. It also helps you review your security reporting metrics and confirm that you are tracking truly useful parameters.
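To make the enrich-then-triage idea concrete, here is a minimal sketch. It assumes a static lookup table of remediation hints and a simple severity ordering. Both are hypothetical stand-ins, since Security Copilot generates its recommendations with a language model rather than a fixed mapping.

```python
from dataclasses import dataclass

# Lower rank means the alert is reviewed first.
SEVERITY_RANK = {"high": 0, "medium": 1, "low": 2}

# Hypothetical remediation hints keyed by alert category.
REMEDIATION_HINTS = {
    "phishing": "Quarantine the message and reset the recipient's credentials.",
    "malware": "Isolate the device and run a full scan.",
}


@dataclass
class Alert:
    alert_id: str
    category: str
    severity: str
    recommendation: str = ""


def enrich_and_triage(alerts: list[Alert]) -> list[Alert]:
    """Attach a recommendation to each alert, then order the queue by severity."""
    for alert in alerts:
        alert.recommendation = REMEDIATION_HINTS.get(
            alert.category, "No playbook found; escalate for manual review."
        )
    # Unknown severities sort after all known ones.
    return sorted(alerts, key=lambda a: SEVERITY_RANK.get(a.severity, len(SEVERITY_RANK)))
```

An analyst working from the returned queue sees the most severe alerts first, each already paired with a suggested next step.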

Improved Data Protection

Data protection is a pillar of enterprise security. Without understanding your sensitive data landscape (storage location, access and sharing permissions, etc.), it is impossible to maintain a strong security posture.

Thanks to native integration with Microsoft Purview, Copilot can be used to quickly analyze sensitive data, create summaries of user- or data-level risks, and identify oversights in data management practices and policies.

Automated Identity Management

Given that three-quarters of data breaches involve a human element, identity and access management (IAM) practices should never be overlooked. All Microsoft business products and Azure services come with adaptive controls for assigning user roles, managing user privileges, and implementing secure authentication methods.

Microsoft Copilot helps security analysts discover overprivileged access, generate new policies, and evaluate licensing options across different solutions. The tool also helps generate detailed security assessments of incidents that involve specific identities.
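One simple way to think about overprivileged access is to compare what each identity has been granted with what it has actually used. The sketch below illustrates only that comparison. The input dictionaries and the function name are hypothetical, and this is not a description of how Security Copilot performs the analysis.

```python
def find_unused_permissions(
    granted: dict[str, set[str]],
    used: dict[str, set[str]],
) -> dict[str, set[str]]:
    """Return, per identity, the permissions that were granted but never used."""
    report: dict[str, set[str]] = {}
    for identity, permissions in granted.items():
        # Identities with no recorded activity have used nothing.
        unused = permissions - used.get(identity, set())
        if unused:
            report[identity] = unused
    return report
```

Identities that appear in the report are candidates for a least-privilege review, not automatic revocation, because rarely used permissions can still be legitimate.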

Proactive Threat Hunting

Threat hunting is one of the key SOC use cases, adopted by larger organizations. The reality is that most cyber threats outpace organizations’ security efforts.

Cyber threat hunting involves a proactive investigation of signs of abnormal behaviors, malicious activities, and potential vulnerabilities in the company’s systems, networks, and infrastructure.

Unlike traditional cybersecurity measures, a threat-hunting strategy aims to identify and neutralize threats before they can cause significant damage. Security Copilot is a helpful companion for such tasks, as it can surface relevant data from connected sources, locate weak configurations or policies, flag outdated patches, and model possible attack chains.

Workforce Development

The cybersecurity industry needs more fresh talent, but overwhelmed analysts rarely have time to properly train new hires. Microsoft Security Copilot helps IT security professionals at every level learn essential security best practices through high-level summary reports and pick up new skills through conversational interactions.

More Natural Language Processing (NLP) Use Cases for Cybersecurity

During the 2023 Microsoft Build Developers Conference, the company also introduced a new development framework for building custom AI apps. Similar to OpenAI, Microsoft now allows developers to use its platform to create custom Copilots that work across ChatGPT, Bing, Dynamics 365 Copilot, Microsoft 365 Copilot, and Windows Copilot.

Microsoft plans to allow anyone to create, test, and deploy their own gen AI applications, which augment the capabilities of existing Microsoft Copilots. For example, to better monitor edge devices, you could give Microsoft Security Copilot access to IoT data. To do so, you need to connect Microsoft Security Copilot to the respective devices and ensure that only relevant data gets collected, indexed, and streamed to Copilot.

That’s where plugins come into play. “A plugin is about how you, the copilot developer, give your copilot or an AI system the ability to have capabilities that it’s not manifesting right now and to connect it to data and connect it to systems that you’re building,” Microsoft says. In other words, it’s a standardized framework for making new data accessible to Copilot applications, with controls on how it can be used. This is a particularly exciting Microsoft Copilot feature, as it can expand the number of security operations AI use cases the technology can support.
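The idea of a plugin as "new data plus usage controls" can be sketched as a small registry. Everything here is hypothetical, including the `PluginRegistry` class, its methods, and the field allow-list. It does not reflect the actual Microsoft plugin manifest format or API.

```python
from typing import Callable

Record = dict[str, str]


class PluginRegistry:
    """Toy registry: each plugin exposes a data source plus a field allow-list."""

    def __init__(self) -> None:
        self._plugins: dict[str, tuple[Callable[[], list[Record]], set[str]]] = {}

    def register(
        self,
        name: str,
        fetch: Callable[[], list[Record]],
        allowed_fields: set[str],
    ) -> None:
        """Connect a new data source and declare which fields may be shared."""
        self._plugins[name] = (fetch, allowed_fields)

    def query(self, name: str) -> list[Record]:
        """Return records from one plugin, keeping only the allowed fields."""
        fetch, allowed_fields = self._plugins[name]
        return [
            {key: value for key, value in record.items() if key in allowed_fields}
            for record in fetch()
        ]
```

In the IoT example, registering a device feed with an allow-list of `device_id` and `firmware` would keep fields such as owner contact details out of the assistant's context.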

Effectively, this framework would expand the scope of NLP use cases for cybersecurity. Accordingly, we expect more software vendors (and organizations with an established SOC function) to experiment with extending their tools with new capabilities:

- Remediation recommendations based on company playbooks. Gen AI apps can be trained on the company’s documents and policies (on top of vendor-recommended best practices) to better guide analysts in their work.

- Automation of a wider range of security tasks. The best cybersecurity tools already include “self-healing” capabilities — automatic rectification of an identified vulnerability. Gen AI security apps may soon include a one-click execution of such actions for routine incidents.

- Reverse engineering of novel threats. Microsoft Security Copilot can already analyze malware code supplied by an analyst and summarize its key characteristics and exploit patterns. Effectively, the algorithms are pre-trained on a large data set of known threats and can thus recognize them. The next generation of tools may also be able to reverse engineer novel (i.e., previously unseen) exploits, providing analysts with richer intel.

- Greater support of behavior analytics. Security Copilot can already recognize some abnormal user behaviors, but it still has some limitations. The newer systems will likely get extra capabilities to process a wider range of security signals and behavior patterns, indicative of internal snooping or external intrusion.

The Future of Generative AI in Cybersecurity

Generative AI has high potential to transform many routine security operations. However, the technology is still at a very early stage of maturity. Remember how GPT-3 initially felt novel, only to be soon outshone by the much more robust GPT-4 model? Similarly, Microsoft Security Copilot will likely receive further updates that make it more reliable and adaptable to a wider range of use cases.

It is also worth remembering that Gen AI outputs are only as good as the initial prompts. When your team asks the wrong questions, the system will produce unhelpful results. Likewise, Gen AI apps can hallucinate when they reach the limit of their capabilities. Hence, it is always worth double-checking the recommendations for accuracy and validity.

Last, but not least: generative AI apps mostly act as a new interface for interacting with the underlying technology. Such solutions are ineffective when your company does not have a properly implemented and configured security stack for infrastructure, application, and network monitoring, an area where the Infopulse cybersecurity team is always happy to help.
